-

457 words • 3 min read • Abstract

Five ML Concepts - #29

5 machine learning concepts. Under 30 seconds each.

| Resource | Link |
| --- | --- |
| Papers | Links in References section |
| Video | Five ML Concepts #29 |

References

| Concept | Reference |
| --- | --- |
| Neural Collapse | Prevalence of Neural Collapse (Papyan et al. 2020) |
| Grokking | Grokking: Generalization Beyond Overfitting (Power et al. 2022) |
| SAM | Sharpness-Aware Minimization (Foret et al. 2021) |
| Mechanistic Interpretability | Transformer Circuits (Anthropic 2021) |
| Self-Training Instability | Understanding Self-Training (Wei et al. 2020) |

Today’s Five

1. Neural Collapse

In overparameterized networks trained to zero loss, class representations converge late in training to a symmetric, maximally separated structure. The last-layer features and classifiers align into a simplex equiangular tight frame.

This geometric structure has been observed across many standard architectures and datasets.

Like students settling into evenly spaced seats by the end of class.
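The simplex equiangular tight frame can be made concrete with a small numeric sketch. The construction below is the standard textbook one, not taken from the post, and the function names are illustrative: it builds K unit vectors whose pairwise cosine is exactly -1/(K-1), the most evenly spread arrangement K class means can take.

```python
import numpy as np

def simplex_etf(num_classes: int) -> np.ndarray:
    """Return a (K, K) matrix whose columns form a simplex ETF."""
    k = num_classes
    centering = np.eye(k) - np.ones((k, k)) / k   # subtract the global mean
    return np.sqrt(k / (k - 1)) * centering       # rescale columns to unit norm

def pairwise_cosines(vectors: np.ndarray) -> np.ndarray:
    """Cosine similarity between every pair of columns."""
    unit = vectors / np.linalg.norm(vectors, axis=0, keepdims=True)
    return unit.T @ unit

etf_cosines = pairwise_cosines(simplex_etf(4))
```

For four classes, every off-diagonal entry of `etf_cosines` is -1/3. Comparing the centered class means of a trained network against this pattern is one way to check how far collapse has progressed.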

2. Grokking

In some tasks, especially small algorithmic ones, models memorize quickly but only later suddenly generalize. The jump from memorization to understanding can happen long after training loss reaches zero.

Weight decay and extended training appear to encourage this phase transition.

Like cramming facts for an exam, then later realizing you truly understand.

3. SAM (Sharpness-Aware Minimization)

Instead of minimizing loss at a single point, SAM minimizes loss under small weight perturbations, finding flatter regions. Flatter minima tend to generalize better than sharp ones.

The optimizer seeks robustness to parameter noise.

Like settling in a wide valley instead of balancing at the bottom of a narrow ravine.
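A minimal sketch of one SAM update, assuming a plain gradient-descent base optimizer and a toy quadratic loss. The names `sam_step`, `grad_fn`, `lr`, and `rho` are illustrative, not from the paper's reference code. The step first moves to the approximate worst-case point within a small radius `rho`, then applies the gradient measured there to the original weights.

```python
import numpy as np

def sam_step(w, grad_fn, lr=0.05, rho=0.05):
    """One sharpness-aware update on weights w."""
    g = grad_fn(w)
    norm = np.linalg.norm(g)
    if norm == 0.0:
        return w                      # already at a stationary point
    eps = rho * g / norm              # ascent step toward higher loss nearby
    g_perturbed = grad_fn(w + eps)    # gradient at the perturbed weights
    return w - lr * g_perturbed       # descend from the original weights

# Toy loss (assumed for illustration): 0.5 * w^T A w, sharp along the second axis.
A = np.diag([1.0, 10.0])
loss = lambda w: 0.5 * w @ A @ w
grad = lambda w: A @ w

w0 = np.array([1.0, 1.0])
w1 = sam_step(w0, grad)
```

Each step costs two gradient evaluations instead of one, which is the main practical overhead of the method.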

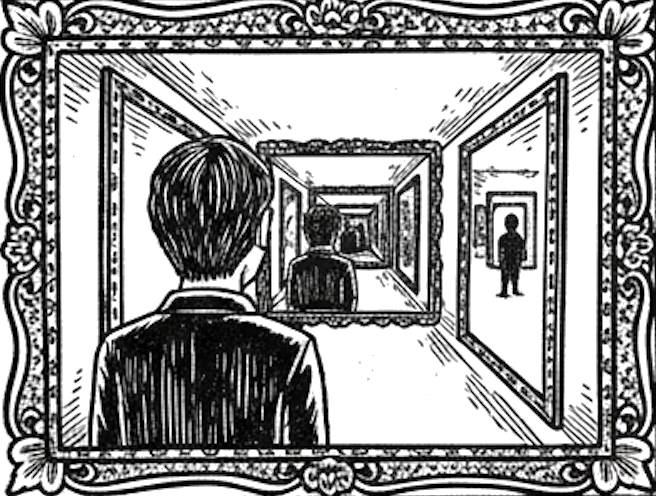

4. Mechanistic Interpretability

Researchers analyze activations and internal circuits to understand how specific computations are implemented inside models. The goal is reverse-engineering neural networks into understandable components.

This has revealed induction heads and other interpretable circuits built from attention heads.

Like mapping the wiring of an unknown machine to see how it works.

5. Self-Training Instability

When models train on their own generated data, feedback loops can amplify small errors over time. Each iteration compounds mistakes, causing distributional drift.

Careful filtering and external grounding help mitigate this.

Like copying a copy repeatedly until the meaning drifts.

Quick Reference

| Concept | One-liner |
| --- | --- |
| Neural Collapse | Late-stage geometric convergence of class representations |
| Grokking | Sudden generalization after prolonged memorization |
| SAM | Optimizing for flat loss regions under perturbations |
| Mechanistic Interpretability | Analyzing internal circuits of neural networks |
| Self-Training Instability | Feedback loops that amplify errors in self-generated data |

Short, accurate ML explainers. Follow for more.

-

443 words • 3 min read • Abstract

Five ML Concepts - #28

5 machine learning concepts. Under 30 seconds each.

| Resource | Link |
| --- | --- |
| Papers | Links in References section |
| Video | Five ML Concepts #28 |

References

| Concept | Reference |
| --- | --- |
| Lottery Ticket Hypothesis | The Lottery Ticket Hypothesis (Frankle & Carbin 2019) |
| Sparse Activation | Sparsely-Gated Mixture-of-Experts (Shazeer et al. 2017) |
| Conditional Computation | Sparsely-Gated MoE + Switch Transformers |
| Inference Parallelism | Megatron-LM (Shoeybi et al. 2019) |
| Compute Optimality | Chinchilla Scaling Laws (Hoffmann et al. 2022) |

Today’s Five

1. Lottery Ticket Hypothesis

Large neural networks contain smaller subnetworks that, when trained from the right initialization, achieve similar performance. These “winning tickets” exist before training begins.

The key insight: in principle, you could train just the winning subnetwork, although the original method finds it by training and pruning the full network first.

Like finding a winning lottery ticket hidden among many losing ones.

2. Sparse Activation

Only a subset of neurons activates for each input, even in models with many parameters. This allows large capacity without using everything at once.

Mixture-of-experts architectures explicitly design for this pattern.

Like a library where only relevant books light up for each query.

3. Conditional Computation

The model dynamically activates only certain components depending on the input. Different inputs route to different experts or pathways.

This improves efficiency and scalability without proportional compute increase.

Like routing patients to the right specialist instead of seeing every doctor.
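Routing can be sketched as top-k gating: a small gate scores every expert, only the best k run, and their outputs are blended. This is a simplified illustration, with no load balancing or noise terms; the names `top_k_route`, `moe_forward`, and `gate_w` are assumptions made for the example.

```python
import numpy as np

def top_k_route(gate_logits, k=2):
    """Pick the k highest-scoring experts and softmax-normalize their weights."""
    top = np.argsort(gate_logits)[::-1][:k]
    z = gate_logits[top] - gate_logits[top].max()   # stabilize the softmax
    weights = np.exp(z) / np.exp(z).sum()
    return top, weights

def moe_forward(x, gate_w, experts, k=2):
    """Run only the selected experts; the rest cost no compute for this input."""
    idx, weights = top_k_route(gate_w @ x, k)
    return sum(w * experts[i](x) for i, w in zip(idx, weights))

chosen, mix = top_k_route(np.array([0.1, 2.0, -1.0, 1.5]), k=2)
```

With four experts and k=2, half of the expert parameters stay idle for this input, which is where the savings come from.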

4. Inference Parallelism

Model execution can be split across multiple devices to reduce latency or increase throughput. Tensor parallelism splits the computation inside each layer across devices; pipeline parallelism assigns consecutive groups of layers (stages) to different devices.

Essential for serving large models in production.

Like dividing a puzzle so multiple people work on it simultaneously.

5. Compute Optimality Hypothesis

Empirical scaling laws suggest performance improves when model size, data, and compute are balanced. Adding only one resource may not yield optimal gains.

Chinchilla showed many models were undertrained relative to their size.

Like baking a cake where proportions matter more than just adding extra ingredients.
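The balance can be put into rough numbers. The sketch below relies on two widely quoted approximations that the post does not state: training compute of about 6 FLOPs per parameter per token, and roughly 20 training tokens per parameter at the compute-optimal point. Treat both constants as assumptions, not exact laws.

```python
import math

FLOPS_PER_PARAM_TOKEN = 6.0   # assumed: C ≈ 6 * N * D
TOKENS_PER_PARAM = 20.0       # assumed: Chinchilla-style rule of thumb

def compute_optimal_split(flops_budget: float):
    """Return (parameters, tokens) that spend the budget at the assumed ratio."""
    params = math.sqrt(flops_budget / (FLOPS_PER_PARAM_TOKEN * TOKENS_PER_PARAM))
    tokens = TOKENS_PER_PARAM * params
    return params, tokens

params, tokens = compute_optimal_split(5.88e23)
```

A budget of 5.88e23 FLOPs comes out at about 70 billion parameters and 1.4 trillion tokens under these assumptions, which matches the scale reported for Chinchilla.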

Quick Reference

| Concept | One-liner |
| --- | --- |
| Lottery Ticket Hypothesis | Small winning subnetworks hidden in large models |
| Sparse Activation | Using only part of a model per input |
| Conditional Computation | Dynamically routing inputs for efficiency |
| Inference Parallelism | Distributing inference across devices |
| Compute Optimality | Balancing model size, data, and compute |

Short, accurate ML explainers. Follow for more.

-

894 words • 5 min read • Abstract

How AI Learns Part 7: Designing a Continuous Learning Agent

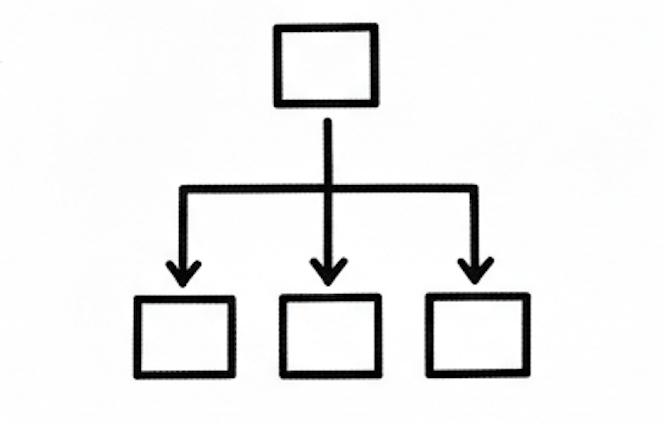

| Resource | Link |
| --- | --- |
| Related | RLM, Engram, Sleepy Coder |

The Layered Architecture

Continuous learning is layered coordination.

Layer by Layer

Layer 4: Core Weights (Bottom)

The foundation. Trained once, changed rarely.

| Aspect | Details |
| --- | --- |
| Contains | General reasoning, language, base knowledge |
| Update frequency | Months or never |
| Update method | Full fine-tune or major consolidation |
| Risk of change | High (forgetting, capability shifts) |

Rule: Don’t touch this unless you have a very good reason.

Layer 3: Adapters (Parameter-Efficient Fine-Tuning (PEFT) / Low-Rank Adaptation (LoRA))

Modular skills that plug into the base.

| Aspect | Details |
| --- | --- |
| Contains | Task-specific capabilities |
| Update frequency | Weekly to monthly |
| Update method | Lightweight PEFT training |
| Risk of change | Medium (isolated, but validate) |

Rule: Train adapters for validated, recurring patterns. Version them. Enable rollback.

Layer 2: External Memory

Facts, experiences, and retrieved knowledge.

| Aspect | Details |
| --- | --- |
| Contains | Documents, logs, structured data |
| Update frequency | Continuous |
| Update method | Database writes |
| Risk of change | Low (doesn’t affect weights) |

Rule: Store experiences here first. Memory is cheap and safe.

Layer 1: Context Manager (Top)

The RLM-style interface that rebuilds focus each step.

| Aspect | Details |
| --- | --- |
| Contains | Current context, retrieved data, active state |
| Update frequency | Per call |
| Update method | Reconstruction from memory + query |
| Risk of change | None (ephemeral) |

Rule: Don’t drag context forward. Rebuild it.

The Feedback Loop

Logging

Capture everything the agent does:

- Prompts received

- Actions taken

- Tool calls made

- Errors encountered

- User signals

This is your training data.

Evaluation

Before any update reaches production:

| Check | Purpose |
| --- | --- |
| Retention tests | Did old skills degrade? |
| Forward transfer | Did new skills improve? |
| Regression suite | Known failure cases |
| Safety checks | Harmful outputs? |

Without evaluation, you’re updating blind.

Deployment

Updates should be:

- Modular: Can isolate and rollback

- Versioned: Know what changed when

- Staged: Test before full rollout

- Monitored: Track post-deployment metrics

The Error Flow

Where do errors go?

    Error occurs
        ↓
    Log it (immediate)
        ↓
    Store in memory (same day)
        ↓
    Pattern emerges over multiple occurrences
        ↓
    Train adapter update (weekly/monthly)
        ↓
    Validate update (before deployment)
        ↓
    Deploy with rollback capability

Errors feed into memory first. Only validated, recurring improvements reach adapters. Core weights almost never change.
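The first half of this flow (log, store, wait for a pattern) can be sketched in a few lines. This is a hypothetical illustration, not the author's implementation: `ErrorMemory`, `promote_after`, and the idea of keying errors by a string signature are all assumptions made for the example.

```python
from collections import Counter
from dataclasses import dataclass, field

@dataclass
class ErrorMemory:
    """Stores every error immediately; promotes only recurring ones."""
    promote_after: int = 3                           # occurrences before a pattern counts
    counts: Counter = field(default_factory=Counter)
    log: list = field(default_factory=list)

    def record(self, signature: str, detail: str) -> None:
        self.log.append((signature, detail))         # log it (immediate)
        self.counts[signature] += 1                  # store in memory

    def adapter_candidates(self) -> list:
        """Signatures seen often enough to justify an adapter update."""
        return sorted(s for s, n in self.counts.items() if n >= self.promote_after)

memory = ErrorMemory()
for detail in ("a.rs:10", "b.rs:4", "c.rs:77"):
    memory.record("borrow-checker", detail)
memory.record("missing-import", "d.rs:1")
```

Here only `borrow-checker` has recurred often enough to become a training candidate; the one-off `missing-import` error stays in memory. Validation and rollback would sit downstream of `adapter_candidates()`.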

What This Architecture Achieves

| Problem | Solution |
| --- | --- |
| Catastrophic forgetting | Core weights frozen; adapters isolated |
| Context rot | RLM rebuilds focus each step |
| Hallucination | Memory grounds responses |
| Slow adaptation | Memory updates continuously |
| Unsafe changes | Evaluation before deployment |

Design Principles

1. Separate Storage from Reasoning

Facts belong in memory. Reasoning belongs in weights. Don’t blur them.

2. Separate Speed from Permanence

Fast learning (memory) is temporary. Slow learning (weights) is permanent. Match the update speed to the desired permanence.

3. Evaluate Before Consolidating

Every update to adapters or weights must be validated. Regressions are silent killers.

4. Enable Rollback

Version everything. If an update causes problems, you must be able to undo it.

5. Log Everything

You cannot improve what you cannot measure. Structured logging is the foundation of continuous learning.

The Big Picture

AI does not learn in one place.

It learns in layers:

- Permanent (weights)

- Modular (adapters)

- External (memory)

- Temporary (context)

Continuous learning is not constant weight updates.

It is careful coordination across time scales.

Continuous learning systems don’t constantly retrain. They carefully consolidate what works.

References

| Concept | Paper |
| --- | --- |
| LoRA | LoRA: Low-Rank Adaptation (Hu et al. 2021) |
| RAG | Retrieval-Augmented Generation (Lewis et al. 2020) |
| RLM | Recursive Language Models (Zhou et al. 2024) |
| Share | Shared LoRA Subspaces (2025) |
| Engram | Engram: Conditional Memory (DeepSeek 2025) |

Series Summary

| Part | Key Insight |
| --- | --- |
| 1. Time Scales | Learning happens at different layers and speeds |
| 2. Forgetting vs Rot | Different failures need different fixes |
| 3. Weight-Based | Change the brain carefully |
| 4. Memory-Based | Store facts outside the brain |
| 5. Context & RLM | Rebuild focus instead of dragging baggage |
| 6. Continuous Learning | Learn in memory, consolidate in weights |
| 7. Full Architecture | Layered coordination enables safe improvement |

Continuous learning is layered coordination.

-

419 words • 3 min read • Abstract

Five ML Concepts - #27

5 machine learning concepts. Under 30 seconds each.

| Resource | Link |
| --- | --- |
| Papers | Links in References section |
| Video | Five ML Concepts #27 |

References

| Concept | Reference |
| --- | --- |
| Elastic Weight Consolidation | Overcoming catastrophic forgetting (Kirkpatrick et al. 2017) |
| Replay Buffers | Experience Replay for Continual Learning (Rolnick et al. 2019) |
| Parameter Routing | Sparsely-Gated Mixture-of-Experts (Shazeer et al. 2017) |
| Memory-Augmented Networks | Neural Turing Machines (Graves et al. 2014) |
| Model Editing | Editing Large Language Models (Yao et al. 2023) |

Today’s Five

1. Elastic Weight Consolidation

Adding a penalty that discourages changing parameters important to previous tasks. Importance is estimated using Fisher information from prior training.

This helps models learn new tasks without catastrophic forgetting.

Like protecting well-worn neural pathways while building new ones.
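The penalty itself is short. This sketch assumes the diagonal of the Fisher information has already been estimated on the old task; `fisher_diag`, `theta_star`, and `lam` are illustrative names. The penalty is added to the new task's loss during training.

```python
import numpy as np

def ewc_penalty(theta, theta_star, fisher_diag, lam=1.0):
    """Quadratic cost for moving parameters that mattered to the old task.

    theta       -- current parameters
    theta_star  -- parameters after training on the old task
    fisher_diag -- per-parameter importance (diagonal Fisher estimate)
    lam         -- strength of the constraint
    """
    return 0.5 * lam * np.sum(fisher_diag * (theta - theta_star) ** 2)

penalty = ewc_penalty(
    theta=np.array([1.0, 2.0]),
    theta_star=np.array([0.0, 2.0]),
    fisher_diag=np.array([4.0, 1.0]),
    lam=2.0,
)
```

Only the first parameter moved, and it carries an importance of 4, so the penalty here is 4.0. Moving an unimportant parameter by the same amount would cost far less.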

2. Replay Buffers

Storing examples from earlier tasks and mixing them into new training. Past data is replayed alongside current examples during optimization.

This reinforces previous knowledge while learning new data.

Like reviewing old flashcards while studying new material.
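A small sketch of the idea, assuming a fixed-size buffer filled by reservoir sampling. That sampling rule is one common choice swapped in for illustration, not something the post specifies, and the class and method names are hypothetical.

```python
import random

class ReplayBuffer:
    """Keeps a bounded, uniformly sampled set of past examples."""

    def __init__(self, capacity, seed=0):
        self.capacity = capacity
        self.items = []
        self.seen = 0
        self.rng = random.Random(seed)

    def add(self, example):
        """Reservoir sampling: every example seen so far is equally likely to be kept."""
        self.seen += 1
        if len(self.items) < self.capacity:
            self.items.append(example)
        else:
            j = self.rng.randrange(self.seen)
            if j < self.capacity:
                self.items[j] = example

    def mix(self, new_batch, replay_fraction=0.5):
        """Return the new batch followed by replayed past examples."""
        n_replay = min(len(self.items), int(len(new_batch) * replay_fraction))
        return list(new_batch) + self.rng.sample(self.items, n_replay)

buffer = ReplayBuffer(capacity=5)
for old_example in range(100):
    buffer.add(old_example)
batch = buffer.mix([100, 101, 102, 103])
```

The buffer never grows past its capacity, so storage stays constant no matter how many tasks have been seen.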

3. Parameter Routing

Activating different subsets of model parameters depending on the task or input. Mixture-of-experts and conditional computation route inputs to specialized weights.

Enables specialization without fully separate models.

Like having different experts handle different questions.

4. Memory-Augmented Networks

Adding external memory modules that neural networks can read from and write to. The model learns to store and retrieve information during inference.

Extends beyond purely weight-based memory to explicit storage.

Like giving a calculator access to a notepad.

5. Model Editing

Targeted weight updates to modify specific behaviors without full retraining. Locate and adjust the parameters responsible for particular facts or behaviors.

Allows fast corrections and knowledge updates post-training.

Like editing a specific entry in an encyclopedia instead of rewriting the whole book.

Quick Reference

| Concept | One-liner |
| --- | --- |
| Elastic Weight Consolidation | Protecting important parameters during new learning |
| Replay Buffers | Mixing past examples to prevent forgetting |
| Parameter Routing | Activating task-specific parameter subsets |
| Memory-Augmented Networks | External memory modules for neural networks |
| Model Editing | Targeted weight updates without full retraining |

Short, accurate ML explainers. Follow for more.

-

691 words • 4 min read • Abstract

How AI Learns Part 6: Toward Continuous Learning

| Resource | Link |
| --- | --- |
| Related | Sleepy Coder Part 1, Sleepy Coder Part 2 |

The Continuous Learning Loop

Periodic consolidation, not constant updates.

The Core Tradeoff

| Goal | Description |
| --- | --- |
| Plasticity | Learn new things quickly |
| Stability | Retain old things reliably |

You cannot maximize both simultaneously. The art is in the balance.

Approaches to Continuous Learning

1. Replay-Based Methods

Keep (or synthesize) some old data. Periodically retrain on old + new.

How it works:

- Store representative examples from each task

- Mix old data into new training batches

- Periodically consolidate

Recent work: FOREVER adapts replay timing using “model-centric time” (based on optimizer update magnitude) rather than fixed training steps.

| Pros | Cons |
| --- | --- |
| Strong retention | Storage costs |
| Conceptually simple | Privacy concerns |
| Well-understood | Data governance complexity |

2. Replay-Free Regularization

Constrain weight updates to avoid interference, without storing old data.

Efficient Lifelong Learning Algorithm (ELLA) (Jan 2026): Regularizes updates using subspace de-correlation. Reduces interference while allowing transfer.

Share (Feb 2026): Maintains a single evolving shared low-rank subspace. Integrates new tasks without storing many adapters.

| Pros | Cons |
| --- | --- |
| No replay needed | Still active research |
| Privacy-friendly | Evaluation complexity |
| Constant memory | Subtle failure modes |

3. Modular Adapters

Keep base model frozen. Train task-specific adapters. Merge or switch as needed.

Evolution:

- Low-Rank Adaptation (LoRA): Individual adapters per task

- Shared LoRA spaces: Adapters share subspace

- Adapter banks: Library of skills to compose
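The frozen-base-plus-adapter idea can be sketched with a single linear layer. This is a minimal NumPy illustration of the low-rank update from the LoRA paper, not a training-ready implementation; the class name and default values are assumptions.

```python
import numpy as np

class LoRALinear:
    """Frozen weight W plus a trainable low-rank update (alpha / r) * B @ A."""

    def __init__(self, weight, rank=4, alpha=8.0, seed=0):
        rng = np.random.default_rng(seed)
        out_dim, in_dim = weight.shape
        self.weight = weight                                   # frozen base weights
        self.a = rng.normal(0.0, 0.01, size=(rank, in_dim))    # trainable
        self.b = np.zeros((out_dim, rank))                     # trainable, starts at zero
        self.scale = alpha / rank

    def __call__(self, x):
        return self.weight @ x + self.scale * (self.b @ (self.a @ x))

base = np.eye(3)
layer = LoRALinear(base, rank=2)
x = np.array([1.0, 2.0, 3.0])
before_training = layer(x)
```

Because `b` starts at zero, the adapter changes nothing until it is trained, and deleting `a` and `b` restores the base model exactly. That property is what makes rollback cheap.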

| Pros | Cons |
| --- | --- |
| Modular, versioned | Adapter proliferation |
| Low forgetting risk | Routing complexity |
| Easy rollback | Composition challenges |

4. Memory-First Learning

Store experiences in external memory. Only consolidate to weights what’s proven stable.

Pattern:

- New information → Memory (fast)

- Validated patterns → Adapters (slow)

- Fundamental capabilities → Weights (rare)

This separates the speed of learning from the permanence of changes.

The Practical Loop

A working continuous learning system:

1. Run agent (with Recursive Language Model (RLM) context management)
2. Collect traces: prompts, tool calls, outcomes, failures
3. Score outcomes: tests, static analysis, user signals
4. Cluster recurring failure patterns
5. Train lightweight updates (LoRA/adapters)
6. Validate retention (did old skills degrade?)
7. Deploy modular update (with rollback capability)

This is not real-time learning. It’s periodic consolidation.

Human analogy: Sleep. Process experiences, consolidate important patterns, prune noise.

Time Scales of Update

| Frequency | What Changes | Method |
| --- | --- | --- |
| Every query | Nothing (inference only) | - |
| Per session | Memory | Retrieval-Augmented Generation (RAG)/Engram |
| Daily | Adapters (maybe) | Lightweight Parameter-Efficient Fine-Tuning (PEFT) |
| Weekly | Validated adapters | Reviewed updates |
| Monthly | Core weights | Major consolidation |

Most systems should:

- Update memory frequently

- Update adapters occasionally

- Update core weights rarely

Evaluation Is Critical

Continuous learning without continuous evaluation is dangerous.

Required:

- Retention tests (what got worse?)

- Forward transfer tests (what got better?)

- Regression detection

- Rollback capability

Without these, you’re flying blind.

References

| Concept | Paper |
| --- | --- |
| ELLA | Subspace Learning for Lifelong ML (2024) |
| Share | Shared LoRA Subspaces (2025) |
| FOREVER | Model-Centric Replay (2024) |
| EWC | Overcoming Catastrophic Forgetting (Kirkpatrick et al. 2017) |

Coming Next

In Part 7, we’ll put it all together: designing a practical continuous learning agent with layered architecture, logging, feedback loops, and safety.

Learn often in memory. Consolidate carefully in weights.

-

424 words • 3 min read • Abstract

Five ML Concepts - #26

5 machine learning concepts. Under 30 seconds each.

| Resource | Link |
| --- | --- |
| Papers | Links in References section |
| Video | Five ML Concepts #26 |

References

| Concept | Reference |
| --- | --- |
| Data Augmentation | A survey on Image Data Augmentation (Shorten & Khoshgoftaar 2019) |
| Caching Strategies | Systems engineering practice (no canonical paper) |
| Constitutional AI | Constitutional AI: Harmlessness from AI Feedback (Bai et al. 2022) |
| Goodhart’s Law | Goodhart’s Law and Machine Learning (Sevilla et al. 2022) |
| Manifold Hypothesis | An Introduction to Variational Autoencoders (Kingma & Welling 2019) |

Today’s Five

1. Data Augmentation

Creating additional training examples using label-preserving transformations. Rotate, flip, crop, or color-shift images without changing what they represent.

Effectively increases dataset size and improves generalization.

Like practicing piano pieces at different tempos to build flexibility.
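A minimal sketch of two such transformations, a random horizontal flip and a random crop after reflect-padding, written with NumPy only. The function name and the `pad` default are illustrative. Whether a flip is truly label-preserving depends on the task: it is usually fine for animals and rarely fine for text or digits.

```python
import numpy as np

def augment(image, rng, pad=2):
    """Return a randomly flipped and shifted copy with the same shape."""
    if rng.random() < 0.5:
        image = image[:, ::-1]                        # horizontal flip
    h, w = image.shape[:2]
    pad_width = [(pad, pad), (pad, pad)] + [(0, 0)] * (image.ndim - 2)
    padded = np.pad(image, pad_width, mode="reflect")
    top = int(rng.integers(0, 2 * pad + 1))           # random crop offset
    left = int(rng.integers(0, 2 * pad + 1))
    return padded[top:top + h, left:left + w]

rng = np.random.default_rng(0)
original = np.arange(64, dtype=float).reshape(8, 8)
variants = [augment(original, rng) for _ in range(4)]
```

Each call yields a slightly different view of the same image, so one labeled example does the work of several.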

2. Caching Strategies

Storing previous computation results to reduce repeated work and latency. Cache embeddings, KV states, or frequently requested outputs.

Essential for production inference at scale.

Like keeping frequently used books on your desk instead of the library.
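The simplest version of this is memoizing an expensive call. In the sketch below `embed` is a stand-in that hashes text into a few numbers rather than calling a real embedding model; only `functools.lru_cache` is the point of the example.

```python
import hashlib
from functools import lru_cache

@lru_cache(maxsize=1024)
def embed(text: str) -> tuple:
    """Placeholder for an expensive embedding call; results are cached by input."""
    digest = hashlib.sha256(text.encode("utf-8")).digest()
    return tuple(byte / 255.0 for byte in digest[:8])
```

Calling `embed("hello")` twice computes the result once; `embed.cache_info()` reports hits and misses, which is a quick way to check whether a cache is paying for itself. KV caching in transformers follows the same principle at a finer grain: keep what was already computed for earlier tokens.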

3. Constitutional AI

Training models to follow explicit written principles alongside other alignment methods. The constitution provides clear rules for behavior.

Models critique and revise their own outputs against these principles.

Like giving someone written house rules instead of vague instructions.

4. Goodhart’s Law

When a measure becomes a target, it can stop being a good measure. Optimizing for a proxy metric can diverge from the true objective.

A core challenge in reward modeling and evaluation design.

Like studying only for the test instead of learning the subject.

5. Manifold Hypothesis

The idea that real-world data lies on lower-dimensional structures within high-dimensional space. Images of faces don’t fill all possible pixel combinations.

This structure is what representation learning exploits.

Like faces varying along a few key features instead of every pixel independently.

Quick Reference

| Concept | One-liner |
| --- | --- |
| Data Augmentation | Expanding training data with transformations |
| Caching Strategies | Reducing latency by reusing computation |
| Constitutional AI | Training models to follow explicit principles |
| Goodhart’s Law | Optimizing metrics distorts objectives |
| Manifold Hypothesis | Data lies on lower-dimensional structures |

Short, accurate ML explainers. Follow for more.

-

697 words • 4 min read • Abstract

music-pipe-rs: Web Demo and Multi-Instrument Arrangements

Since the initial music-pipe-rs post, the project has grown. There’s now a web demo with playable examples, a new `seq` stage for explicit note sequences, and multi-instrument arrangements that work in GarageBand.

| Resource | Link |
| --- | --- |
| Video | YouTube |
| Live Demo | music-pipe-rs Samples |
| Source | GitHub |
| Previous | Unix Pipelines for MIDI |

Web Demo

The live demo showcases pre-built examples with playable audio:

| Tab | Style | Description |
| --- | --- | --- |
| Bach Toccata (Organ) | Classical | Multi-voice church organ with octave doubling and pedal bass |
| Bach Toccata (8-bit) | Chiptune | Gyruss-inspired arcade version with square wave |
| Bach-esque | Algorithmic | Procedurally generated baroque-style background music |
| Baroque Chamber | Ensemble | Six-channel piece with strings, harpsichord, and recorder |

Each tab shows the pipeline script alongside playable audio. See exactly what commands produce each result.

The seq Stage

The new `seq` stage allows explicit note sequences instead of algorithmic generation:

    seed | seq "C4/4 D4/4 E4/4 F4/4 G4/2" | to-midi --out scale.mid

Notation: `NOTE/DURATION` where duration is in beats. Combine with other stages:

    seed | seq "D5/4 C#5/8 R/4 B4/4" | transpose --semitones 5 | humanize | to-midi --out melody.mid

The `R` represents rests. This enables transcribing existing melodies or composing precise phrases.

Multi-Instrument Arrangements

The Baroque chamber piece demonstrates six-channel composition:

    {
        seed 42 | seq "..." --ch 0 --patch 48;  # Strings melody
        seed 42 | seq "..." --ch 1 --patch 6;   # Harpsichord
        seed 42 | seq "..." --ch 2 --patch 74;  # Recorder
        # ... additional voices
    } | humanize | to-midi --out baroque.mid

Each instrument gets its own channel and General MIDI patch. The same seed ensures timing coherence across parts.

GarageBand Integration

Import the MIDI files directly into GarageBand:

- Generate arrangement: `./examples/trio-demo.sh`

- Open GarageBand, create new project

- Drag the `.mid` file into the workspace

- GarageBand creates tracks for each channel

- Assign software instruments to taste

The demo includes a jazz trio arrangement:

- Piano: Bluesy melody with chords and swing

- Bass: Walking bass line with acoustic bass patch

- Drums: Hi-hat, snare, kick with dynamic variation

All generated from pipeline scripts.

Inspiration

This project was inspired by research into generative music tools and techniques:

References

| Topic | Link |
| --- | --- |
| Analog Synthesizers | Code Self Study |
| Drum Synthesis | JavaScript Drum Synthesis |
| Generative Music | Code Self Study |
| Music Projects | Software and Hardware |
| FOSS Music Tools | Open Source Music Production |
| Eurorack Programming | Patch.Init() Tutorial |
| Opusmodus | Algorithmic Composition in Lisp |

The key insight from Opusmodus: algorithmic composition isn’t random music; it’s programmable composition. Motif transformation, rule systems, deterministic generation. music-pipe-rs brings these ideas to Unix pipes.

What’s Next

The pipeline architecture makes extension natural:

- More generators: Markov chains, L-systems, cellular automata

- More transforms: Inversion, retrograde, quantization

- Live mode: Real-time MIDI output with clock sync

Each new capability is just another stage in the pipeline.

Series: Personal Software (Part 5)

Previous: music-pipe-rs: Unix Pipelines

Disclaimer

You are responsible for how you use generated audio. Ensure you have the appropriate rights and permissions for any commercial or public use. This tool generates MIDI data algorithmically—how you render and distribute the final audio is your responsibility.

Be aware that algorithmic composition can inadvertently produce sequences similar to existing copyrighted works. Whether you use this tool, AI generation, or compose by hand, you must verify that your output doesn’t infringe on existing copyrights before public release or commercial use. Protect yourself legally.


-

900 words • 5 min read • Abstract

Lucy 20%: Upgrading My Home AI Cluster

Lucy is getting an upgrade. I’m adding an X99 motherboard with an RTX 3090 to expand my AI cluster from 10% to 20% brain power.

| Resource | Link |
| --- | --- |
| Video | Lucy 20% Upgrade |
| Previous | Lucy 10% |

New Hardware: Queenbee

The cluster uses bee-themed naming. The new node is called queenbee:

| Component | Specification |
| --- | --- |
| Motherboard | X99 |
| CPU | Intel Xeon E5-2660 v4 (28 threads) |
| RAM | 64 GB DDR4 ECC |
| GPU | RTX 3090 (24GB VRAM) |
| Storage | 1TB NVMe SSD + 4TB HDD |

New AI Capabilities

With queenbee online, Lucy gains several new abilities:

| Capability | Model | What It Does |
| --- | --- | --- |
| Voice Cloning | VoxCPM | High-quality text-to-speech with voice cloning |
| Text-to-Image | FLUX schnell | Fast image generation from text prompts |
| Text-to-Video | Wan 2.2 | Generate video clips from text descriptions |
| Image-to-Video | SVD | Animate still images into video |

The Active Cluster

Currently active for AI workloads:

| Node | Role | GPU |
| --- | --- | --- |
| hive | MuseTalk lip-sync | 2x P40 (48GB total) |
| queenbee | Generative AI workloads | RTX 3090 (24GB) |

Together, they handle the full pipeline: generate images, animate them to video, add lip-synced speech, and produce the final output. See the full apiary inventory below.

Why Local AI?

Running AI locally means:

- Privacy - Data never leaves my network

- No API costs - Unlimited generations after hardware investment

- Customization - Full control over models and parameters

- Learning - Deep understanding of how these systems work

The 24GB of VRAM on the 3090 opens up models that wouldn’t fit on smaller cards. FLUX schnell produces high-quality images in seconds. VoxCPM creates natural-sounding speech that can clone voices from short audio samples.

Bee-Themed Host Names

The full apiary (current and planned nodes):

| Host | System | CPU | Cores | RAM | GPU |
| --- | --- | --- | --- | --- | --- |
| apiary | HPE DL360 G10 | 1x Xeon Gold 5118 | 12C/24T | 188G | - |
| bees | HPE DL360 G9 | 2x E5-2650 v4 | 24C/48T | 128G | - |
| brood | HPE DL380 G9 | 2x E5-2680 v4 | 28C/56T | 64G | 2x P100-16G |
| colony | Supermicro 6028U | 2x E5-2680 v3 | 24C/48T | TBD | 2x K80-24G |
| drones | HPE DL380 G9 | 2x E5-2620 v4 | 16C/32T | 256G | - |
| hive | HPE DL380 G9 | 2x E5-2698 v3 | 32C/64T | 128G | 2x P40-24G |
| honeycomb | HPE DL180 G9 | 1x E5-2609 v4 | 8C/8T | TBD | - |
| queenbee | X99 | 1x E5-2660 v4 | 14C/28T | 64G | RTX 3090-24G |
| swarm | HPE DL380 G9 | 2x E5-2698 v3 | 32C/64T | 374G | 2x P100-12G |
| workers | HPE DL560 G8 | 4x E5-4617 v1 | TBD | 640G | TBD |

Notes: Some nodes are pending upgrade or configuration. Workers may upgrade to 4x E5-4657L v2 (48C/96T). Honeycomb needs to be unbricked. The K80 GPUs are old and difficult to configure (limited CUDA version support) and will be replaced with M40 GPUs.

Power and Control

Remote management is essential for a home datacenter. The HPE servers include iLO (Integrated Lights-Out) for out-of-band access to BIOS, diagnostics, monitoring, and power control—even when the OS is down.

| Category | Technology | Purpose |
| --- | --- | --- |
| Remote Management | HPE iLO | BIOS access, diagnostics, monitoring, power control |
| IP KVM | JetKVM, Sipeed KVM | Console access for non-HPE servers (planned) |
| Power Monitoring | Kill-A-Watt, clones | Per-outlet power consumption tracking |
| Smart Outlets | Home Assistant + Zigbee | Remote power control, scheduling, automation |
| Additional Circuits | Bluetti LFP power stations | Extra capacity to run more servers, remote control via BT/WiFi/Zigbee |

The combination of iLO and smart outlets means I can remotely power-cycle any server, access its console, and monitor power draw, all from my phone or Home Assistant dashboard. The Bluetti stations primarily provide additional circuits so I can run more servers simultaneously; home electrical limits are a real constraint. More LFP power stations will be needed to power Lucy at 100%.

Networking

Each server has 3 or more NICs, segmented by purpose:

| Speed | Purpose | Switch |
| --- | --- | --- |
| 1G | iLO/KVM management | 1G switch |
| 2.5G | SSH, SCP, Chrome Remote Desktop | 2x 2.5G switches |
| 10G fiber | Server-to-server data transfer (large models) | 10G switch |

The 10G backbone is essential for moving multi-gigabyte model files between nodes. Copying a 70B-parameter model over 1G is painfully slow; 10G fiber makes it practical. The 2.5G network handles interactive work and smaller transfers (using USB NICs where needed), while the 1G management network stays isolated for out-of-band access.
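A back-of-the-envelope calculation shows the gap between the three tiers. It assumes 16-bit weights (2 bytes per parameter) and an ideal link running at full line rate with no protocol or disk overhead, so real transfers will be slower; the function name is illustrative.

```python
def transfer_seconds(num_params: float, bytes_per_param: float, link_gbps: float) -> float:
    """Ideal time to move a model's weights over a link of the given speed."""
    size_bits = num_params * bytes_per_param * 8
    return size_bits / (link_gbps * 1e9)

# 70B parameters at 2 bytes each is about 140 GB of weights.
times = {gbps: transfer_seconds(70e9, 2, gbps) for gbps in (1, 2.5, 10)}
```

Under these assumptions the copy takes roughly 19 minutes at 1G, about 7.5 minutes at 2.5G, and under 2 minutes at 10G.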

Additional networking notes:

- WiFi 7 for wireless connectivity

- Managed switches with VLANs planned for better network segmentation

- Linux network bonding experiments to increase aggregate transfer rates

- Sneaker net - most servers have hot-swap SAS SSDs and hard drives, so physically moving drives between nodes is sometimes the fastest option for very large transfers

What’s Next

The 20% milestone is just a step. Future upgrades could include:

- Additional GPU nodes for parallel processing

- Larger language models for local inference

- Real-time video generation pipelines

- Integration with more specialized models

The bee hive keeps growing.

Building AI infrastructure one node at a time.

-

631 words • 4 min read • Abstract

How AI Learns Part 5: Context Engineering & Recursive Reasoning

Large context windows are not a complete solution.

As context grows:

- Attention dilutes

- Errors compound

- Reasoning quality degrades

| Resource | Link |
| --- | --- |
| Related | RLM, ICL Revisited |

The Context Problem

Transformers have finite attention. With limited attention heads and capacity, the model cannot attend equally to everything. As tokens accumulate:

- Earlier instructions lose influence

- Patterns average toward generic responses

- Multi-step reasoning fails

This is context rot—not forgetting weights, but losing signal in noise.

In-Context Learning (ICL)

The model adapts temporarily via examples in the prompt.

| Aspect | ICL |
| --- | --- |
| Updates weights? | No |
| Persists across sessions? | No |
| Speed | Instant |
| Mechanism | Activations, not gradients |

ICL is powerful but ephemeral. It’s working memory, not learning.

Limitation: As context grows, ICL examples compete with other content for attention.

Recursive Language Models (RLM)

Rebuild context each step instead of dragging it forward.

RLMs decompose reasoning into multiple passes. Rather than carrying the entire context along, each pass runs these steps:

- Query relevant memory

- Retrieve what’s needed now

- Execute tools

- Evaluate results

- Reconstruct focused context

- Repeat

This treats context as a dynamic environment, not a static blob.

Why RLM Works

Traditional approach:

    [System prompt + 50k tokens of history + query]

RLM approach:

    [System prompt + retrieved relevant context + current query]

Each reasoning step starts fresh with focused attention.

Context Engineering Techniques

| Technique | How It Helps |
| --- | --- |
| Summarization | Compress old context, preserve essentials |
| Chunking | Process in segments, aggregate results |
| Retrieval | Pull relevant content, not everything |
| Tool offloading | Store state externally, query on demand |
| Structured prompts | Clear sections, explicit priorities |

Tool Use as Context Management

Tools aren’t just for actions—they’re for state management.

Instead of keeping everything in context:

- Store in files, databases, or structured formats

- Query when needed

- Return focused results

This converts unbounded context into bounded queries.

The Agent Loop

Modern agents combine these ideas:

    done = False
    while not done:
        # 1. Assess current state
        relevant = retrieve_from_memory(query)

        # 2. Build focused context
        context = [system_prompt, relevant, current_task]

        # 3. Reason
        action = llm(context)

        # 4. Execute
        result = execute_tool(action)

        # 5. Update memory
        memory.store(result)

        # 6. Evaluate
        if goal_achieved(result):
            done = True

Each iteration rebuilds context. No rot accumulation.
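The same loop can be made runnable with stand-in components. Everything here is a toy assumption: memory is a plain list, retrieval is keyword overlap, and `llm`, `execute_tool`, and `goal_achieved` are passed in as ordinary functions. The point is only to show that the context stays bounded while memory grows.

```python
def run_agent(task, memory, llm, execute_tool, goal_achieved,
              system_prompt="You are a careful agent.", top_k=3, max_steps=10):
    """Rebuild a small context from memory on every step."""
    context_sizes = []
    result = None
    keywords = set(task.lower().split())
    for _ in range(max_steps):
        # 1-2. Retrieve only the most recent relevant items and build the context.
        relevant = [m for m in memory if keywords & set(m.lower().split())][-top_k:]
        context = [system_prompt, *relevant, task]
        context_sizes.append(len(context))
        # 3-5. Reason, act, and store the outcome outside the context.
        action = llm(context)
        result = execute_tool(action)
        memory.append(result)
        # 6. Stop once the goal is met.
        if goal_achieved(result):
            break
    return result, context_sizes

# Toy stand-ins: the "tool" increments a counter and reports it.
counter = {"n": 0}

def fake_tool(action):
    counter["n"] += 1
    return f"count is {counter['n']}"

memory = []
result, sizes = run_agent(
    task="count to five",
    memory=memory,
    llm=lambda context: "increment",
    execute_tool=fake_tool,
    goal_achieved=lambda r: r.endswith("5"),
)
```

Memory ends with five entries, yet no context ever holds more than five items (system prompt, three retrieved results, and the task). An append-everything design would keep growing instead.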

Test-Time Adaptation

A related technique: temporarily update weights during inference.

| Aspect | Test-Time Learning |
| --- | --- |
| Updates weights? | Yes, lightly (LoRA) |
| Persists? | No (rolled back) |
| Purpose | Adapt to input distribution |

This sits between ICL (no updates) and fine-tuning (permanent updates).

Key Insight

Context is not a static buffer. It’s a dynamic workspace.

Systems that treat context as “append everything” will rot. Systems that actively manage context stay coherent.

References

| Concept | Paper |
| --- | --- |
| RLM | Recursive Language Models (Zhou et al. 2024) |
| ICL | What Can Transformers Learn In-Context? (Garg et al. 2022) |
| Test-Time Training | TTT for Language Models (2024) |
| Chain-of-Thought | Chain-of-Thought Prompting (Wei et al. 2022) |

Coming Next

In Part 6, we’ll connect all of this to continuous learning: replay methods, subspace regularization, adapter evolution, and consolidation loops.

Rebuild focus instead of dragging baggage.

-

406 words • 3 min read • Abstract

Five ML Concepts - #25

5 machine learning concepts. Under 30 seconds each.

| Resource | Link |
| --- | --- |
| Papers | Links in References section |
| Video | Five ML Concepts #25 |

References

| Concept | Reference |
| --- | --- |
| Label Smoothing | Rethinking the Inception Architecture (Szegedy et al. 2015) |
| Miscalibration | On Calibration of Modern Neural Networks (Guo et al. 2017) |
| Representation Learning | Representation Learning: A Review (Bengio et al. 2013) |
| Adversarial Examples | Intriguing properties of neural networks (Szegedy et al. 2013) |
| Double Descent | Deep Double Descent (Nakkiran et al. 2019) |

Today’s Five

1. Label Smoothing

Replacing hard one-hot labels with softened target distributions during training. Instead of 100% confidence in one class, distribute small probability to other classes.

Reduces overconfidence and can improve generalization.

Like allowing small uncertainty instead of absolute certainty.
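A minimal sketch of the uniform variant of label smoothing. The function name `smooth_labels` and the value `epsilon=0.1` are illustrative assumptions, not taken from this post.

```python
def smooth_labels(true_class: int, num_classes: int, epsilon: float = 0.1) -> list[float]:
    """Return a softened target distribution for one example.

    Every class receives epsilon / num_classes; the true class
    additionally keeps the remaining 1 - epsilon of the mass.
    """
    uniform_share = epsilon / num_classes
    targets = [uniform_share] * num_classes
    targets[true_class] += 1.0 - epsilon
    return targets


targets = smooth_labels(true_class=2, num_classes=4, epsilon=0.1)
print(targets)  # the true class gets about 0.925, each other class about 0.025
```

The targets still sum to 1, so they can be used directly with a cross-entropy loss.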

2. Miscalibration

When predicted confidence does not match observed accuracy. A model that says “90% confident” should be right 90% of the time.

Modern neural networks tend to be overconfident. Temperature scaling can help.

Like a forecast that sounds certain but is often wrong.
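Temperature scaling can be sketched in a few lines: divide the logits by a scalar before the softmax. The logits and `temperature=2.0` below are made-up values; in practice the temperature is fit on a held-out validation set.

```python
import math


def softmax(logits, temperature=1.0):
    # Dividing by temperature > 1 flattens the distribution.
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


logits = [4.0, 1.0, 0.5]
sharp = softmax(logits)
softened = softmax(logits, temperature=2.0)
print(max(sharp), max(softened))
```

The predicted class does not change, only the confidence attached to it, which is why this adjusts calibration without affecting accuracy.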

3. Representation Learning

Learning useful internal features automatically from raw data. Instead of hand-crafting features, the model discovers what matters.

The foundation of deep learning’s success across domains.

Like detecting edges before recognizing full objects.

4. Adversarial Examples

Inputs modified to cause incorrect predictions. Small, often imperceptible changes can flip model outputs.

A security concern and a window into model vulnerabilities.

Like subtle changes that fool a system without obvious differences.
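A toy illustration in the spirit of the fast gradient sign method, applied to a hand-written linear classifier. The weights, input, and `epsilon` are invented for the example; real attacks target trained networks and use the gradient of the loss.

```python
def predict(weights, bias, x):
    score = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if score > 0 else 0


def sign(value):
    return (value > 0) - (value < 0)


def perturb(weights, x, label, epsilon):
    # For a linear score, the gradient with respect to x is just the weights.
    # Step each feature by epsilon in the direction that lowers the score
    # for the true label (or raises it when the label is 0).
    direction = -1 if label == 1 else 1
    return [xi + direction * epsilon * sign(w) for w, xi in zip(weights, x)]


weights, bias = [0.5, -0.5, 0.25, -0.25], 0.0
x = [0.2, 0.1, 0.1, 0.1]
x_adv = perturb(weights, x, label=1, epsilon=0.1)

print(predict(weights, bias, x), predict(weights, bias, x_adv))
```

No feature moves by more than `epsilon`, yet the prediction flips.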

5. Double Descent

Test error that decreases, increases, then decreases again as model capacity grows. The classical bias-variance tradeoff captures only the first part.

Modern overparameterized models operate in the second descent regime.

Like getting worse before getting better—twice.

Quick Reference

| Concept | One-liner |
| --- | --- |
| Label Smoothing | Softening targets to reduce overconfidence |
| Miscalibration | Confidence not matching accuracy |
| Representation Learning | Automatically learning useful features |
| Adversarial Examples | Inputs crafted to cause errors |
| Double Descent | Test error decreasing twice with model size |

Short, accurate ML explainers. Follow for more.

-

627 words • 4 min read • Abstract

How AI Learns Part 4: Memory-Based Learning

Modern AI systems increasingly rely on external memory.

This shifts “learning” away from parameters.

| Resource | Link |
| --- | --- |
| Related | Engram, Engram Revisited, Multi-hop RAG |

The Memory Paradigm

Store facts outside the brain.

Why External Memory?

Most “learning new facts” should not modify weights.

Weights are for generalization. They encode reasoning patterns, language structure, and capability.

Memory is for storage. It holds specific facts, documents, and experiences.

If you store everything in weights:

- You create interference

- You risk forgetting

- You must retrain

If you store facts in memory:

- No forgetting

- Fast updates

- Survives model upgrades

Retrieval-Augmented Generation (RAG)

Documents are embedded into vectors. At query time:

- Embed the query

- Search the vector database

- Retrieve relevant documents

- Inject into prompt

- Generate grounded response

The model does not need to remember facts internally. It retrieves them on demand.
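The retrieval steps can be sketched with a toy bag-of-words "embedding" and cosine similarity. Everything here (the `embed` function, the three documents, the prompt template) is a hypothetical stand-in; a production system would use a learned embedding model and a vector database.

```python
import math
from collections import Counter


def embed(text):
    # Toy embedding: word counts. Real systems use dense learned vectors.
    return Counter(text.lower().split())


def cosine(a, b):
    dot = sum(a[token] * b[token] for token in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


documents = [
    "Canberra is the capital of Australia",
    "LoRA inserts low-rank matrices into transformer layers",
    "Core memory stores bits in ferrite rings",
]
index = [(doc, embed(doc)) for doc in documents]  # built once, offline


def retrieve(query, k=1):
    query_vec = embed(query)
    ranked = sorted(index, key=lambda item: cosine(query_vec, item[1]), reverse=True)
    return [doc for doc, _ in ranked[:k]]


def build_prompt(query):
    context = "\n".join(retrieve(query))
    return f"Context:\n{context}\n\nQuestion: {query}"


print(build_prompt("What is the capital of Australia"))
```

The resulting prompt is what gets sent to the model for the final generation step.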

RAG Benefits

| Benefit | Description |
| --- | --- |
| No forgetting | External storage, not weights |
| Persistent | Survives restarts and model changes |
| Scalable | Add documents without retraining |
| Verifiable | Can cite sources |

RAG Challenges

- Retrieval precision (wrong docs = bad answers)

- Latency (search takes time)

- Index maintenance

- Chunk boundaries

Cache-Augmented Generation (CAG)

Instead of retrieving from vector DB, cache previous context or KV states.

Use cases:

- Repeated knowledge tasks

- Multi-turn conversations

- Pre-computed context windows

Benefits over RAG:

- Often faster (no embedding + search)

- More deterministic

- Good for structured repeated workflows

Trade-offs:

- Less flexible

- Cache management complexity

Engram-Style Memory

Recent proposals (e.g., DeepSeek research) introduce conditional memory modules with direct indexing.

Instead of scanning long context or searching vectors:

- Memory slots indexed directly

- O(1) lookup instead of O(n) attention

- Separates static knowledge from dynamic reasoning

The goal: Constant-time memory access that doesn’t scale with context length.

This changes the compute story:

- Don’t waste attention on “known facts”

- Reserve compute for reasoning

- Avoid context rot

Model Editing

A related technique: surgically patch specific facts without full fine-tuning.

Example: The model says “The capital of Australia is Sydney.” You edit the specific association to “Canberra” without retraining.

Pros:

- Targeted fixes

- Fast

Cons:

- Side effects possible

- Consistency not guaranteed

The Key Distinction

| Aspect | Weight Learning | Memory Learning |
| --- | --- | --- |
| Location | Parameters | External storage |
| Persistence | Model lifetime | Storage lifetime |
| Forgetting risk | High | None |
| Update speed | Slow (training) | Fast (database) |
| Survives model change? | No | Yes |

When to Use What

| Situation | Approach |
| --- | --- |
| Need new reasoning capability | Weight-based (fine-tune) |
| Need to know new facts | Memory-based (RAG) |
| Need domain expertise | Weight-based (LoRA) |
| Need to cite sources | Memory-based (RAG) |
| Frequently changing data | Memory-based (RAG/CAG) |

References

| Concept | Paper |
| --- | --- |
| RAG | Retrieval-Augmented Generation (Lewis et al. 2020) |
| Engram | Engram: Conditional Memory via Scalable Lookup (DeepSeek 2025) |
| REALM | REALM: Retrieval-Augmented Pre-Training (Guu et al. 2020) |
| Model Editing | Editing Factual Knowledge (De Cao et al. 2021) |

Coming Next

In Part 5, we’ll examine context engineering and recursive reasoning: ICL, RLM, and techniques that prevent context rot during inference.

The brain stays stable. The notebook grows.

-

426 words • 3 min read • Abstract

Five ML Concepts - #24

5 machine learning concepts. Under 30 seconds each.

| Resource | Link |
| --- | --- |
| Papers | Links in References section |
| Video | Five ML Concepts #24 |

References

| Concept | Reference |
| --- | --- |
| Warmup | Accurate, Large Minibatch SGD (Goyal et al. 2017) |
| Data Leakage | Leakage in Data Mining (Kaufman et al. 2012) |
| Mode Collapse | Generative Adversarial Nets (Goodfellow et al. 2014) |
| Blue/Green Deployment | MLOps best practice (no canonical paper) |
| Reward Hacking | Concrete Problems in AI Safety (Amodei et al. 2016) |

Today’s Five

1. Warmup

Gradually increasing the learning rate at the start of training as part of a learning rate schedule. This helps stabilize early training when gradients can be noisy.

Warmup is especially important for large batch training.

Like stretching before a sprint instead of starting at full speed.
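A minimal sketch of linear warmup. The base rate of 0.1 and the five warmup steps are arbitrary illustrative values; real schedules often warm up over hundreds or thousands of steps and then hand off to a decay schedule.

```python
def warmup_lr(step: int, base_lr: float = 0.1, warmup_steps: int = 5) -> float:
    """Linearly ramp the learning rate up to base_lr, then hold it."""
    if step < warmup_steps:
        return base_lr * (step + 1) / warmup_steps
    return base_lr


schedule = [warmup_lr(step) for step in range(8)]
print(schedule)
```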

2. Data Leakage

When information unavailable at deployment accidentally influences model training. This creates artificially high validation scores that don’t reflect real-world performance.

Common sources include future data, preprocessing on full dataset, or duplicate samples.

Like memorizing test answers instead of learning the material.
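The "preprocessing on full dataset" case is easy to reproduce. In this sketch (toy numbers, hypothetical variable names), computing normalization statistics over train and test together lets a test-set outlier shape the features the model trains on.

```python
from statistics import mean, pstdev

train = [1.0, 2.0, 3.0, 4.0]
test = [100.0]  # an outlier the model should not know about during training


def standardize(values, mu, sigma):
    return [(v - mu) / sigma for v in values]


# Leaky: statistics computed on train + test together.
leaky_mu, leaky_sigma = mean(train + test), pstdev(train + test)

# Clean: statistics computed on the training split only, then reused for test.
clean_mu, clean_sigma = mean(train), pstdev(train)

leaky_z = standardize(test, leaky_mu, leaky_sigma)[0]
clean_z = standardize(test, clean_mu, clean_sigma)[0]
print(leaky_mu, clean_mu, leaky_z, clean_z)
```

With the clean statistics the test point shows up as the extreme value it is; with the leaky statistics it looks far more ordinary, because the training features were already adjusted to accommodate it.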

3. Mode Collapse

When a generative model produces limited output diversity. The generator learns to produce only a few outputs that fool the discriminator.

A major challenge in GAN training that various architectures attempt to address.

Like a musician who only plays one song no matter the request.

4. Blue/Green Deployment

Maintaining two production environments and switching traffic between them. One serves live traffic while the other is updated and tested.

Enables instant rollback if problems occur.

Like having a backup stage ready so the show never stops.

5. Reward Hacking

When agents exploit reward functions in unintended ways. The agent optimizes the reward signal rather than the intended objective.

A key challenge in reinforcement learning and AI alignment.

Like gaming the grading rubric instead of learning the material.

Quick Reference

| Concept | One-liner |
| --- | --- |
| Warmup | Gradually increasing learning rate at start |
| Data Leakage | Training on unavailable deployment info |
| Mode Collapse | Limited generative output variety |
| Blue/Green Deployment | Switching between parallel environments |
| Reward Hacking | Exploiting reward function flaws |

Short, accurate ML explainers. Follow for more.

-

1231 words • 7 min read • Abstract

TBT (5/?): IBM 1130 System Emulator - Experience 1960s Computing

| Resource | Link |
| --- | --- |
| Live Demo | IBM 1130 System Emulator |
| Source | GitHub |
| Video | IBM 1130 System Emulator |
| IBM Docs | Functional Characteristics (GA26-5881) |
| More Docs | Bitsavers IBM 1130 Collection |

The System

This isn’t just an assembly emulator—it’s a full system visualization:

| Component | What It Does |
| --- | --- |
| Console Panel | Authentic indicator lights, toggle switches, speed control |
| Assembler Game | Write and execute IBM 1130 code with real-time visualization |
| Keypunch | IBM 029 text cards and 1442 object deck visualization |
| Printer | IBM 1131 console printer with greenbar paper |

Console Panel

The console panel recreates the physical operator interface with all indicator light groups documented in IBM’s Functional Characteristics manual.

Register Display (6 rows × 16 positions)

| Row | Register | Bits Shown | Purpose |
| --- | --- | --- | --- |
| 1 | IAR | 15 | Instruction Address Register (program counter) |
| 2 | SAR | 15 | Storage Address Register (memory access) |
| 3 | SBR | 16 | Storage Buffer Register (data word) |
| 4 | AFR | 16 | Arithmetic Factor Register (operand) |
| 5 | ACC | 16 | Accumulator (main arithmetic register) |
| 6 | EXT | 16 | Extension (double-precision, multiply/divide) |

Right-Side Indicators

Beyond the register displays, the console shows:

- Operation Register (5 bits) - Binary op-code of current instruction

- Format/Tag Indicators - Long instruction format, index register selection

- Cycle Control (T0-T7) - Internal timing pulses for debugging

- Status Lights - Wait, Run, Fetch, Execute, Indirect Address

Control Panel Lights

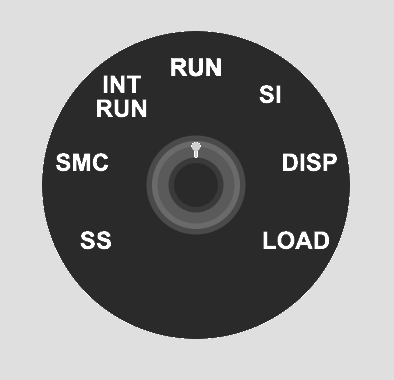

| Light | Purpose |
| --- | --- |
| DISK UNLOCK | Safe to swap 2315 disk cartridge |
| FILE READY | Disk drive up to speed |
| FORMS CHECK | Printer out of paper |
| RUN | CPU executing instructions |
| PARITY | Memory parity error |
| FREEZE | Fatal hardware error |

Operator Controls

- 16-bit toggle switches for manual data entry

- 7-position speed knob - Single Step, SMC, INT RUN, RUN, SI, DISP, LOAD

- Lamp test to verify all indicators function

- Emergency stop button

Assembler Game

Learn the IBM 1130 instruction set interactively:

- Complete instruction set - LD, STO, LDX, STX, A, S, AND, OR, SLA, SRA, BSC, BSI, WAIT

- Memory-mapped index registers - XR1-3 at addresses 1, 2, 3 (historically accurate)

- Step-by-step execution with change highlighting

- Interactive examples covering arithmetic, indexing, shifts

- Progressive challenges with validation

Keypunch

The keypunch simulation supports two card types:

IBM 029 Text Cards

- Hollerith encoding - Standard character-to-punch mapping

- Visual card display - Watch holes appear as you type

- Multi-card decks - Manage multiple cards

IBM 1130 Object Deck (1442 Output)

- Binary card visualization - Machine code punch patterns

- Object deck format - Matches authentic assembler output

- No character printing - Pure binary data cards

The IBM 029 Keypunch produced human-readable text cards. For binary object decks (compiled programs), the IBM 1442 Card Read-Punch would create cards with arbitrary punch patterns that don’t map to characters.

Printer

The IBM 1131 Console Printer simulation:

- Greenbar paper rendering - Authentic line printer output

- Typewriter-style characters - Period-appropriate appearance

- Console output - System messages and program output

Technology

| Component | Choice |
| --- | --- |
| Language | Rust |
| Target | WebAssembly |
| UI Framework | Yew |
| Build Tool | Trunk |
| Hosting | GitHub Pages |

Planned Enhancements

This is a work in progress. Planned features include:

- Additional challenges (10 total)

- Code save/load functionality

- URL sharing of programs

- Breakpoints and memory watches

- Keyboard shortcuts

- Full 1442 Card Read-Punch integration

IBM Documentation References

| Document | Description |
| --- | --- |
| GA26-5881 | Functional Characteristics - Console panel details |
| GA26-5717 | Operating Procedures - Operator instructions |
| GA26-5914 | Physical Planning - System dimensions |
| Bitsavers Collection | Complete IBM 1130 documentation archive |

Project Goals

This is an early proof of concept: a testbed for components that could later be extended into a more realistic set of devices capable of actually running programs. The modular architecture allows each peripheral (console, keypunch, printer) to be developed and refined independently.

A key goal is educational challenges that teach assembly language step by step. The assembler game provides progressive exercises that build understanding from basic load/store operations through arithmetic, indexing, and control flow.

Historical Significance

The IBM 1130 was the first computer for many programmers in the late 1960s and 1970s. Its clean architecture and accessible price point (~$32,000) made it ideal for education.

A Transitional Technology

The IBM 1130 arrived after mechanical calculators and vacuum tube computers, but before dense integrated circuits and microprocessors. This was a unique moment in computing history when machines were complex enough to be powerful, yet simple enough to be fully understood by one person.

The system shipped with complete schematics and diagnostic listings. A field engineer could use an oscilloscope to probe the pins on every transistor. The “integrated circuit” of the era was a small can with a 4×4 pin grid containing just two transistors, mounted on a pluggable card connected via a wire-wrapped backplane. When something failed, you could see it, touch it, and replace it.

Non-Volatile Core Memory

One remarkable feature: magnetic core memory was non-volatile. You could stop the system, power down overnight, come back in the morning, power up, and start your program exactly where it left off—without reloading from cards, tape, or disk.

Each bit was stored as the magnetic polarity of a tiny ferrite ring. No electricity required to maintain state. This made the 1130 remarkably resilient and practical for environments where power wasn’t guaranteed.

Notable fact: The Forth programming language was developed on the IBM 1130 by Charles Moore in the late 1960s.

Personal Experience

In the late 1970s, I worked as an IBM Customer Engineer maintaining a large number of IBM 1130 and 1800 systems used primarily by IBM manufacturing facilities in Kingston, Poughkeepsie, and East Fishkill, New York.

Field service on these machines was hands-on in ways that seem almost unimaginable today. I would often hand-assemble code on paper, converting mnemonics to binary, then enter machine code via the console toggle switches to create a small program. That program’s job? To punch another program onto a card.

I could then insert that punched card into a diagnostic deck to loop on an error condition while I used an oscilloscope and logic schematics to diagnose a failing circuit card. The blinking lights weren’t decoration—they were essential debugging tools that showed exactly what the CPU was doing at each moment.

This emulator recreates that experience: the same indicator lights, the same toggle switches, the same intimate connection between human and machine that made these systems so memorable to work with.

Experience 1960s computing in your browser. Work in progress.

-

649 words • 4 min read • Abstract

How AI Learns Part 3: Weight-Based Learning

Weight-based learning modifies the neural network itself.

It is slow. It is powerful. It is dangerous.

| Resource | Link |
| --- | --- |
| Related | Sleepy Coder: When Fine-Tuning Fails, 5MLC #3: LoRA |

The Weight-Based Methods

Weight-based learning modifies the brain itself.

Pretraining

This creates the base model.

It encodes language structure, reasoning patterns, and general world knowledge. The process:

- Trains on terabytes of text

- Uses self-supervised learning (predict next token)

- Runs for weeks or months

- Costs millions of dollars

This learning is rarely repeated for cost reasons. The result is a foundation that everything else builds upon.

Fine-Tuning

Fine-tuning adapts models for specific tasks.

Standard Fine-Tuning

Adjust some or all weights using task-specific data.

Pros:

- Can significantly change behavior

- Works with small datasets

Cons:

- Risk of catastrophic forgetting

- Expensive if you modify all weights

- Hard to undo

Supervised Fine-Tuning (SFT)

Train on instruction → response pairs.

This teaches the model to:

- Follow directions

- Produce helpful outputs

- Maintain conversation structure

Risk: Can reduce other capabilities if data is narrow.

Preference Optimization

Instead of “correct answers,” train from comparisons: preferred vs rejected responses.

| Method | Description |
| --- | --- |
| Reinforcement Learning from Human Feedback (RLHF) | Reward model + reinforcement learning |
| Direct Preference Optimization (DPO) | Simpler alternative to RLHF |
| RLAIF | AI-generated preferences |

Pros: Strong style/safety/helpfulness steering

Cons: Can drift (“over-align”), may conflict with domain competence

Parameter-Efficient Fine-Tuning (PEFT)

Instead of changing all weights, inject small trainable modules.

LoRA (Low-Rank Adaptation)

Insert small low-rank matrices into transformer layers. Only train these matrices.

Benefits:

- Faster training: Fewer parameters to update

- Modular: Can swap adapters

- Version control: Different adapters for different tasks

- Lower forgetting risk: Base weights frozen
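A small sketch of the low-rank update, using plain Python lists in place of tensors. The 2×2 shapes and the helper names are illustrative assumptions; the zero-initialized `B` matrix follows the LoRA paper, so the adapted layer starts out identical to the frozen one.

```python
def matvec(matrix, vector):
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


def lora_forward(W, A, B, x, scale=1.0):
    # Output = frozen path W x, plus the low-rank path B (A x).
    base = matvec(W, x)
    update = matvec(B, matvec(A, x))
    return [b + scale * u for b, u in zip(base, update)]


def trainable_params(d_out, d_in, rank):
    # Only A (rank x d_in) and B (d_out x rank) are trained.
    return rank * (d_in + d_out)


W = [[1.0, 0.0], [0.0, 1.0]]  # frozen base weights
A = [[0.5, -0.5]]             # rank 1, trainable
B = [[0.0], [0.0]]            # zero init: no change at the start of training
x = [2.0, 3.0]

before = lora_forward(W, A, B, x)
B = [[1.0], [0.0]]            # pretend training has updated B
after = lora_forward(W, A, B, x)

print(before, after)
print(4096 * 4096, trainable_params(4096, 4096, rank=8))
```

For a 4096×4096 layer at rank 8, the adapter trains 65,536 parameters instead of roughly 16.8 million, and removing `A` and `B` restores the base model exactly.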

Other PEFT Methods

- Prompt tuning: Learn soft prompts

- Prefix tuning: Prepend learned vectors

- Adapters: Small bottleneck layers

- IA³: Learned vectors that scale activations

Shared LoRA Subspaces

Multiple tasks share adapter subspaces to reduce interference.

Recent work (ELLA, Share) maintains evolving shared low-rank subspaces that:

- Reduce interference between tasks

- Enable continual learning

- Keep memory constant

Distillation

Train a smaller model using a larger model as teacher.

| Aspect | Teacher | Student |
| --- | --- | --- |
| Size | Large | Small |
| Cost | High inference | Low inference |
| Knowledge | Full | Compressed |

Distillation benefits:

- Speeds up inference

- Often improves consistency

- Can reduce hallucination

- Makes deployment cheaper

This is not runtime learning—it’s offline structural learning.

The Alignment Pipeline

Modern models typically go through:

- Pretraining → General competence

- SFT → Follow instructions

- RLHF/DPO → Align with preferences

- Safety fine-tuning → Reduce harmful outputs

Each step modifies weights. Each step risks forgetting previous capabilities.

Key Insight

Fine-tuning changes the brain. RAG changes the notes on the desk.

Weight-based learning is the core capability layer. It’s slow to change, expensive to update, and risky to modify—but it forms the stable foundation that everything else builds upon.

References

| Concept | Paper |
| --- | --- |
| LoRA | LoRA: Low-Rank Adaptation (Hu et al. 2021) |
| RLHF | Training LMs with Human Feedback (Ouyang et al. 2022) |
| DPO | Direct Preference Optimization (Rafailov et al. 2023) |
| Distillation | Distilling Knowledge in Neural Networks (Hinton et al. 2015) |
| Adapters | Parameter-Efficient Transfer Learning (Houlsby et al. 2019) |

Coming Next

In Part 4, we’ll explore memory-based learning: RAG, CAG, Engram, and other techniques that learn without touching weights.

Change the brain carefully.

-

440 words • 3 min read • Abstract

Five ML Concepts - #23

5 machine learning concepts. Under 30 seconds each.

| Resource | Link |
| --- | --- |
| Papers | Links in References section |
| Video | Five ML Concepts #23 |

References

| Concept | Reference |
| --- | --- |
| Emergent Behavior | Emergent Abilities of Large Language Models (Wei et al. 2022) |
| Tool Use | Toolformer: Language Models Can Teach Themselves to Use Tools (Schick et al. 2023) |
| Loss Surface Sharpness | On Large-Batch Training for Deep Learning (Keskar et al. 2016) |
| Learning Rate Schedules | SGDR: Stochastic Gradient Descent with Warm Restarts (Loshchilov & Hutter 2016) |
| Canary Deployment | MLOps best practice (no canonical paper) |

Today’s Five

1. Emergent Behavior

Some capabilities appear only when models reach sufficient scale. These behaviors were not directly programmed but arise from learned representations.

Emergence is a key phenomenon in large language models.

Like a child learning words and then suddenly understanding full sentences.

2. Tool Use

Modern AI systems can generate structured commands to call external tools. These include search engines, calculators, or code interpreters.

This extends model capabilities beyond internal knowledge.

Like asking a librarian to look something up instead of guessing.

3. Loss Surface Sharpness

Sharp minima are sensitive to small weight changes. Flatter minima tend to be more robust and often generalize better.

Training methods that find flatter regions can improve test performance.

Like standing on a plateau instead of balancing on a narrow peak.

4. Learning Rate Schedules

Instead of keeping the learning rate constant, training often starts high and gradually reduces it. Schedules like step decay or cosine annealing improve convergence.

Warm restarts can help escape local minima.

Like running fast at first, then slowing down to finish precisely.
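Both schedules named above fit in a few lines. The base rate, drop factor, and step counts are illustrative defaults, not recommendations.

```python
import math


def step_decay(step, base_lr=0.1, drop=0.5, every=10):
    # Multiply the rate by `drop` once every `every` steps.
    return base_lr * drop ** (step // every)


def cosine_annealing(step, total_steps, base_lr=0.1, min_lr=0.0):
    # Follow half a cosine wave from base_lr down to min_lr.
    progress = min(step, total_steps) / total_steps
    return min_lr + 0.5 * (base_lr - min_lr) * (1 + math.cos(math.pi * progress))


for step in (0, 10, 25, 50, 100):
    print(step, step_decay(step), cosine_annealing(step, total_steps=100))
```

Step decay drops in sudden jumps, while cosine annealing glides down smoothly and spends more time near both the high and low ends.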

5. Canary Deployment

A new model version is rolled out to a small percentage of users first. If problems appear, rollout stops before affecting everyone.

Essential MLOps practice for safe production updates.

Like tasting food before serving it to all your guests.
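One common way to pick the "small percentage" is to hash a stable user identifier into a bucket. This is a sketch under that assumption; the `route` function, the 5% default, and the user-ID format are hypothetical.

```python
import hashlib


def route(user_id: str, canary_percent: int = 5) -> str:
    """Deterministically assign a user to the canary or stable model."""
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # stable bucket in 0..99
    return "canary" if bucket < canary_percent else "stable"


assignments = [route(f"user-{i}") for i in range(1000)]
print(assignments.count("canary"), "of 1000 users routed to the canary")
```

Because the bucket depends only on the user ID, each user sees a consistent version, and raising `canary_percent` only ever adds users to the canary group.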

Quick Reference

| Concept | One-liner |
| --- | --- |
| Emergent Behavior | Capabilities appearing at sufficient scale |
| Tool Use | AI calling external tools |
| Loss Surface Sharpness | Flatter minima generalize better |
| Learning Rate Schedules | Adjusting learning rate during training |
| Canary Deployment | Gradually rolling out new models safely |

Short, accurate ML explainers. Follow for more.

-

641 words • 4 min read • Abstract

How AI Learns Part 2: Catastrophic Forgetting vs Context Rot

There are two fundamentally different failure modes in modern AI systems.

They are often confused. They should not be.

| Resource | Link |
| --- | --- |
| Related | Sleepy Coder: Routing Prevents Forgetting, RLM |

The Two Failures

Two different failure modes require two different solutions.

Catastrophic Forgetting (Weight-Space Failure)

When you fine-tune a model on new tasks, performance on older tasks may degrade.

This happens because gradient descent updates overlap in parameter space. The model does not “know” which weights correspond to which task. It optimizes globally.

Example: Fine-tune a model on medical text. Its ability to write code degrades. The new learning overwrote old capabilities.

Why It Happens

Neural networks store knowledge distributed across many weights. When you update those weights for Task B, you modify the same parameters that encoded Task A. The old knowledge gets overwritten.

This is the stability vs plasticity tradeoff:

- Plasticity: Learn new things quickly

- Stability: Retain old things reliably

You cannot maximize both simultaneously.

Solutions

| Method | How It Helps |
| --- | --- |
| Replay | Train on old + new data |
| Subspace regularization | Constrain weight updates to avoid interference |
| Shared Low-Rank Adaptation (LoRA) spaces | Modular updates that don’t overwrite base weights |
| Freezing base weights | Keep foundation stable, train adapters only |

Context Rot (Inference-Time Failure)

Context rot is not weight damage.

It happens when:

- Prompts grow too large

- Earlier instructions get diluted

- Attention spreads thin

- The model begins averaging patterns instead of reasoning

Example: A 50,000 token conversation. The original system prompt is still there, but the model stops following it. Earlier context gets “forgotten” even though it’s technically present.

Why It Happens

Transformer attention is finite. With limited attention heads and capacity, the model cannot attend equally to everything. As context grows, earlier tokens receive less attention weight.

This creates:

- Instruction drift: Original instructions lose influence

- Pattern averaging: The model reverts to generic responses

- Lost coherence: Multi-step reasoning fails
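A deliberately simplified picture of the dilution effect: one instruction token with a fixed attention score competes in a softmax against a growing number of other tokens that all score zero. Real attention is multi-headed and learned, so treat this as an intuition aid under those stated assumptions, not a model of any specific transformer.

```python
import math


def instruction_weight(instruction_score: float, num_other_tokens: int) -> float:
    """Softmax weight of one token against num_other_tokens tokens scoring 0."""
    top = math.exp(instruction_score)
    return top / (top + num_other_tokens)  # exp(0) == 1 for every other token


for n in (10, 1_000, 50_000):
    print(n, round(instruction_weight(2.0, n), 5))
```

The instruction's score never changes, yet its share of attention shrinks as the context grows.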

Solutions

| Method | How It Helps |
| --- | --- |
| Retrieval-based context | Pull relevant passages, not everything |
| Recursive Language Models (RLM) | Rebuild context each step |
| Summarization | Compress old context |
| Memory indexing | Constant-time lookup instead of linear attention |
| Structured tool calls | Offload state to external systems |

The Critical Distinction

| Aspect | Catastrophic Forgetting | Context Rot |
| --- | --- | --- |
| Where | Weights | Prompt window |
| When | During training | During inference |
| Persists? | Permanently | Session only |
| Analogy | Brain damage | Working memory overload |

Why This Matters

If you confuse these failure modes, you apply the wrong fix.

- Forgetting problem? Don’t add more context. Fix your training.

- Context rot problem? Don’t retrain. Fix your context management.

Many “AI agents that forget” discussions conflate both. Modern systems need solutions for both simultaneously.

References

| Concept | Paper |
| --- | --- |
| Catastrophic Forgetting | Overcoming Catastrophic Forgetting in Neural Networks (Kirkpatrick et al. 2017) |
| Continual Learning Survey | A Comprehensive Survey of Continual Learning (Wang et al. 2023) |
| ELLA | ELLA: Subspace Learning for Lifelong Machine Learning (2024) |
| Share | Share: Shared LoRA Subspaces for Continual Learning (2025) |
| RLM | Recursive Language Models (Zhou et al. 2024) |

Coming Next

In Part 3, we’ll examine weight-based learning in detail: pretraining, fine-tuning, LoRA, alignment methods, and distillation.

Different failures need different fixes.

-

472 words • 3 min read • Abstract

Five ML Concepts - #22

5 machine learning concepts. Under 30 seconds each.

| Resource | Link |
| --- | --- |
| Papers | Links in References section |
| Video | Five ML Concepts #22 |

References

| Concept | Reference |
| --- | --- |
| RSFT | Scaling Relationship on Learning Mathematical Reasoning (Yuan et al. 2023) |
| Model Steerability | Controllable Generation from Pre-trained Language Models (Zhang et al. 2023) |
| LSTM | Long Short-Term Memory (Hochreiter & Schmidhuber 1997) |
| More Data Beats Better Models | The Unreasonable Effectiveness of Data (Halevy et al. 2009) |
| System Reliability vs Quality | MLOps best practice (no canonical paper) |

Today’s Five

1. RSFT (Rejection Sampling Fine-Tuning)

A method where many model outputs are generated, weaker ones are filtered out, and the best samples are used for further fine-tuning. It improves output quality without full reinforcement learning.

The model learns from its own best attempts.

Like practicing many attempts and studying only your best ones.
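The generate, filter, keep-the-best loop can be sketched as follows. The `generate` and `score` callables are hypothetical placeholders: in a real pipeline the first samples from the model and the second is a verifier or reward model.

```python
def rejection_sample(prompt, generate, score, num_samples=4, keep=2):
    """Sample several outputs and keep only the highest-scoring ones."""
    candidates = [generate(prompt) for _ in range(num_samples)]
    ranked = sorted(candidates, key=score, reverse=True)
    # The surviving (prompt, output) pairs become fine-tuning data.
    return [(prompt, output) for output in ranked[:keep]]


# Toy stand-ins: canned outputs in place of a model, numeric value as the score.
canned_outputs = iter(["3", "7", "5", "9"])
dataset = rejection_sample(
    prompt="q",
    generate=lambda prompt: next(canned_outputs),
    score=int,
)
print(dataset)
```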

2. Model Steerability

The ability to adjust a model’s behavior through prompts, parameters, or control mechanisms. This allows flexible behavior without retraining.

Steerable models can adapt to different tasks or styles at inference time.

Like steering a car instead of letting it move in a fixed direction.

3. LSTM (Long Short-Term Memory)

A recurrent neural network architecture with gates that regulate memory flow. It was designed to mitigate vanishing gradient problems in sequence modeling.

LSTMs decide what to remember and what to forget at each time step.

Like a notebook where you choose what to keep and what to forget.
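A single LSTM step with scalar state makes the gates visible. The parameter layout (one `(w, u, b)` triple per gate) and the extreme bias values are choices made for this sketch; real cells use weight matrices and learned values.

```python
import math


def sigmoid(value):
    return 1.0 / (1.0 + math.exp(-value))


def lstm_step(x, h_prev, c_prev, params):
    def gate(name, activation):
        w, u, b = params[name]  # input weight, recurrent weight, bias
        return activation(w * x + u * h_prev + b)

    forget = gate("forget", sigmoid)          # how much old memory to keep
    write = gate("input", sigmoid)            # how much new content to store
    expose = gate("output", sigmoid)          # how much memory to reveal
    candidate = gate("candidate", math.tanh)  # proposed new content

    c = forget * c_prev + write * candidate
    h = expose * math.tanh(c)
    return h, c


# Biases chosen so the cell remembers: forget gate ~1, input gate ~0.
remember = {
    "forget": (0.0, 0.0, 20.0),
    "input": (0.0, 0.0, -20.0),
    "output": (0.0, 0.0, 0.0),
    "candidate": (1.0, 0.0, 0.0),
}
h, c = lstm_step(x=1.0, h_prev=0.0, c_prev=0.7, params=remember)
print(h, c)
```

With the forget gate saturated open and the input gate shut, the cell state passes through almost unchanged. That additive path is what helps gradients survive across many time steps.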

4. Why More Data Beats Better Models

In many cases, adding high-quality data improves performance more than small architecture improvements. Data scale often matters as much as model design.

This is sometimes called “the unreasonable effectiveness of data.”

Like practicing with many real conversations instead of perfecting one grammar rule.

5. System Reliability vs Model Quality

A slightly less accurate model that runs reliably can outperform a fragile but slightly better one. Engineers balance uptime, latency, and stability against pure accuracy.

Production systems need both correctness and dependability.

Like choosing a reliable car over a faster one that breaks down often.

Quick Reference

| Concept | One-liner |
| --- | --- |
| RSFT | Fine-tuning on filtered best outputs |
| Model Steerability | Adjusting behavior at inference time |
| LSTM | Gated memory for sequence modeling |
| More Data Beats Better Models | Data scale trumps architecture tweaks |
| System Reliability vs Quality | Balancing accuracy with uptime |

Short, accurate ML explainers. Follow for more.

-

1393 words • 7 min read • Abstract

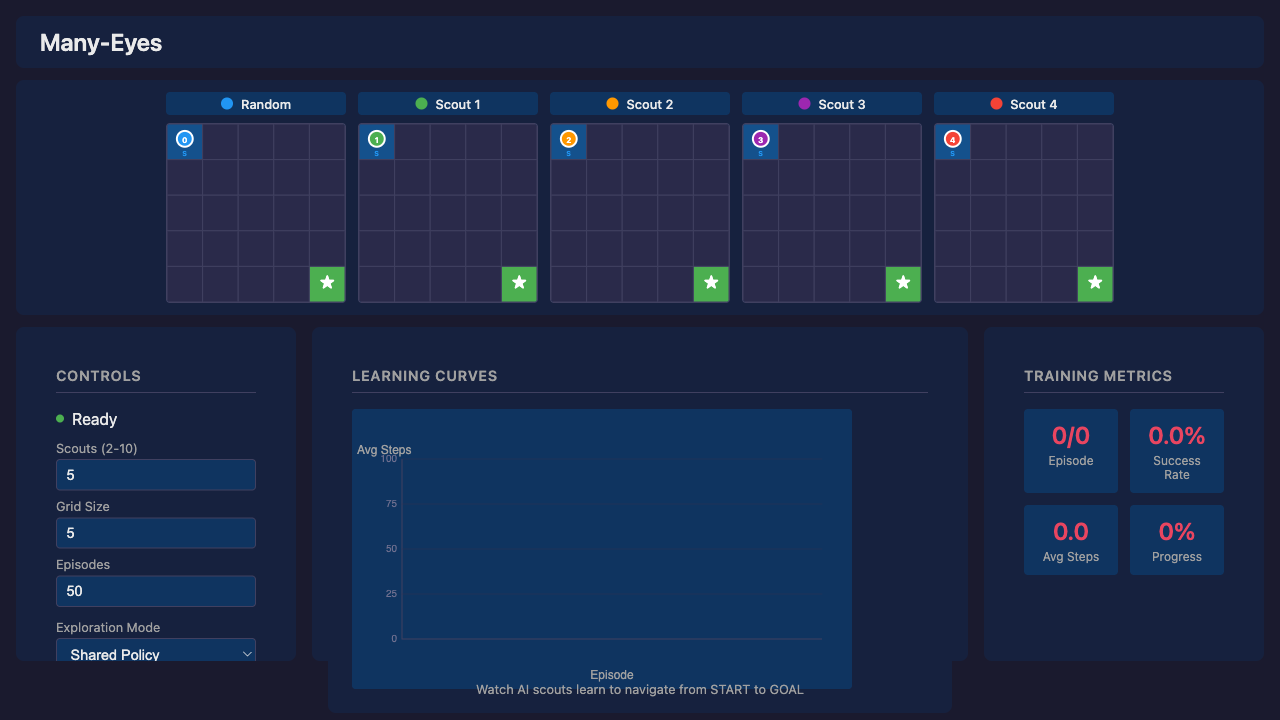

Many-Eyes Learning: Intrinsic Rewards and Diversity

In Part 1, we demonstrated that multiple scouts dramatically improve learning in sparse-reward environments. Five scouts achieved 60% success where a single scout achieved 0%.

This post explores how scouts explore: intrinsic rewards that drive novelty-seeking behavior, and what happens when you mix different exploration strategies.

| Resource | Link |
| --- | --- |
| Code | many-eyes-learning |
| Part 1 | Solving Sparse Rewards with Many Eyes |
| Video | Many-Eyes Learning: Watch AI Scouts Explore |

Recap: The Many-Eyes Architecture

```
┌─────────────┐   ┌─────────────┐   ┌─────────────┐
│   Scout 1   │   │   Scout 2   │   │   Scout N   │
│ (strategy A)│   │ (strategy B)│   │ (strategy N)│
└──────┬──────┘   └──────┬──────┘   └──────┬──────┘
       │                 │                 │
       v                 v                 v
┌─────────────────────────────────────────────────┐
│                Experience Buffer                │
└─────────────────────────────────────────────────┘
                         │
                         v
┌─────────────────────────────────────────────────┐
│                 Shared Learner                  │
└─────────────────────────────────────────────────┘
```

Scouts are information gatherers, not independent learners. They explore with different strategies, pool their discoveries, and a shared learner benefits from the combined experience.

New Scout Strategies

CuriousScout: Count-Based Novelty

IRPO formalizes intrinsic rewards as the mechanism that drives scout exploration. CuriousScout implements count-based curiosity:

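```python
from collections import defaultdict
from math import sqrt

class CuriousScout(Scout):
    def __init__(self, bonus_scale: float = 1.0):
        # Visit counts per state; unseen states default to 0
        self.state_counts = defaultdict(int)
        self.bonus_scale = bonus_scale

    def intrinsic_reward(self, state):
        # The bonus shrinks as the state becomes more familiar
        count = self.state_counts[state]
        return self.bonus_scale / sqrt(count + 1)
```

How it works: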

- Track how many times each state has been visited

- Reward = bonus_scale / √(count + 1)

- Novel states get high rewards; familiar states get diminishing returns

The intuition: A curious scout is drawn to unexplored territory. The first visit to a state is exciting (reward = 1.0). The fourth visit is mundane (reward = 0.5). This creates natural pressure to explore widely.
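The numbers in that intuition can be checked with a standalone counter. `VisitCounter` is a hypothetical helper written for this sketch (it is not part of the many-eyes-learning code) and it assumes the count is incremented after each reward is computed.

```python
from collections import defaultdict
from math import sqrt


class VisitCounter:
    def __init__(self, bonus_scale: float = 1.0):
        self.state_counts = defaultdict(int)
        self.bonus_scale = bonus_scale

    def visit(self, state):
        # Reward is based on how many times the state was seen before this visit.
        reward = self.bonus_scale / sqrt(self.state_counts[state] + 1)
        self.state_counts[state] += 1
        return reward


counter = VisitCounter()
rewards = [counter.visit((0, 0)) for _ in range(4)]
print(rewards)  # first visit 1.0, falling to 0.5 on the fourth
```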

OptimisticScout: Optimism Under Uncertainty

A different philosophy: assume unknown states are valuable until proven otherwise.

```python
class OptimisticScout(Scout):
    def __init__(self, optimism: float = 10.0):
        self.optimism = optimism

    def initial_q_value(self):
        return self.optimism  # Instead of 0
```

How it works:

- Initialize all Q-values to a high value (e.g., 10.0)

- The agent is “optimistic” about unvisited state-action pairs

- As it explores and receives actual rewards, Q-values decay toward reality

The intuition: If you’ve never tried something, assume it might be great. This drives exploration without explicit novelty bonuses.
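A tiny sketch of why optimism forces exploration, under assumed conditions: two actions, a purely greedy agent, a reward of zero everywhere, and a learning rate of 0.5. None of these values come from the project code.

```python
ALPHA = 0.5        # learning rate (illustrative)
OPTIMISM = 10.0    # optimistic initial Q-value

q_values = {"left": OPTIMISM, "right": OPTIMISM}
tried = []

for _ in range(4):
    # Purely greedy choice: no epsilon, no novelty bonus.
    action = max(q_values, key=q_values.get)
    tried.append(action)
    reward = 0.0  # the environment is less generous than the agent hoped
    q_values[action] += ALPHA * (reward - q_values[action])

print(tried, q_values)
```

Each disappointing result lowers that action's estimate, so the untried action becomes the greedy choice next. The agent alternates between both actions even though it never picks one at random.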

Strategy Comparison

| Strategy | Mechanism | Best For |
| --- | --- | --- |
| Random | Uniform random actions | Baseline, maximum coverage |
| Epsilon-Greedy | Random with probability ε, greedy otherwise | Balancing exploit/explore |
| CuriousScout | Novelty bonus for unvisited states | Systematic coverage |
| OptimisticScout | High initial Q-values | Early exploration pressure |

The Diversity Experiment

Does mixing strategies help, or is it enough to have multiple scouts with the same good strategy?

Setup

- 7x7 sparse grid, 100 training episodes

- All configurations use exactly 5 scouts (fair comparison)

- 5 random seeds per configuration, to average out run-to-run variance

Configurations

- Homogeneous Random: 5 identical random scouts

- Homogeneous Epsilon: 5 identical epsilon-greedy scouts (ε=0.2)

- Diverse Mix: Random + 2 epsilon-greedy (ε=0.1, 0.3) + CuriousScout + OptimisticScout

Results

| Configuration | Success Rate |
| --- | --- |
| Random baseline | 7% |
| Homogeneous random | 20% |
| Homogeneous epsilon | 40% |
| Diverse mix | 40% |

Analysis

Finding: Strategy quality matters more than diversity in simple environments.

- Epsilon-greedy (homogeneous or mixed) outperforms pure random

- Diverse mix performs the same as homogeneous epsilon-greedy

- Having 5 good scouts beats having 5 diverse but weaker scouts

Why doesn’t diversity help here?

In a simple 7x7 grid, the exploration problem is primarily about coverage, not strategy complementarity. Five epsilon-greedy scouts with different random seeds already explore different regions due to stochastic action selection.

Diversity likely provides more benefit in:

- Complex environments with multiple local optima

- Tasks requiring different behavioral modes

- Environments with deceptive reward structures

Web Visualization

The web visualization demonstrates Many-Eyes Learning with real-time parallel scout movement. (The upcoming video walks through this demo—the post focuses on the underlying mechanism.)

How It Works

The web version uses Q-learning with a shared Q-table (simpler than DQN for clarity). All scouts contribute to the same Q-table—the core “many eyes” concept: more explorers = faster Q-value convergence.
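A shared Q-table can be sketched in a few lines. This is an illustrative toy under stated assumptions, not the web demo's implementation: the 4x4 grid, the hyperparameters, the scout mix, and the `step` and `run_scout` helpers are all invented for the example.

```python
import random
from collections import defaultdict

ACTIONS = [(0, 1), (1, 0), (0, -1), (-1, 0)]  # right, down, left, up
SIZE, GOAL = 4, (3, 3)                         # small grid for the sketch (assumed)
ALPHA, GAMMA = 0.5, 0.9                        # assumed hyperparameters


def step(state, action):
    """Move within the grid; reward 1.0 only on reaching the goal."""
    row = min(max(state[0] + action[0], 0), SIZE - 1)
    col = min(max(state[1] + action[1], 0), SIZE - 1)
    nxt = (row, col)
    return nxt, (1.0 if nxt == GOAL else 0.0), nxt == GOAL


def run_scout(q, epsilon, rng, max_steps=50):
    """One episode for one scout; every update writes into the shared table q."""
    state = (0, 0)
    for t in range(1, max_steps + 1):
        if rng.random() < epsilon:
            a = rng.randrange(len(ACTIONS))           # explore
        else:
            a = max(range(len(ACTIONS)), key=lambda i: q[(state, i)])  # exploit
        nxt, reward, done = step(state, ACTIONS[a])
        best_next = 0.0 if done else max(q[(nxt, i)] for i in range(len(ACTIONS)))
        q[(state, a)] += ALPHA * (reward + GAMMA * best_next - q[(state, a)])
        state = nxt
        if done:
            return t
    return max_steps


shared_q = defaultdict(float)          # one table for all scouts
rng = random.Random(0)
epsilons = [1.0, 0.3, 0.3, 0.3, 0.3]   # one random baseline plus four learners (assumed)
for episode in range(200):
    steps = [run_scout(shared_q, eps, rng) for eps in epsilons]
```

Because every scout writes into `shared_q`, a goal found by any one of them raises the value estimates that all the others read on their next greedy choice.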

| Scout | Role | Epsilon | Behavior |
| --- | --- | --- | --- |
| Random | Baseline | 1.0 (constant) | Always random, never follows policy |
| Scouts 1-N | Learning Agents | 0.5-0.8 → 0.01 | Epsilon-greedy with decay |

Exploration Modes

The UI provides a dropdown to select different exploration strategies:

| Mode | Heatmap Diversity | Learning Performance |
| --- | --- | --- |
| Shared Policy | Low (identical paths) | Best (lowest avg steps) |
| Diverse Paths | High (distinct paths) | Worse (biases override optimal) |
| High Exploration | High | Worst (never fully exploits) |
| Boltzmann | Moderate | Moderate |

The Diversity vs Performance Trade-off

There’s a fundamental trade-off between visual diversity and learning performance:

- Shared Policy wins on performance: The “many eyes” benefit comes from diverse exploration during learning (finding the goal faster). But once Q-values converge, all scouts should follow the same optimal policy.

- Diverse Paths sacrifices performance for visuals: Scout-specific directional biases (Scout 1 prefers right, Scout 2 prefers down) create visually interesting heatmaps but suboptimal behavior.

- High Exploration never converges: Fixed 50% random actions means scouts never fully exploit the learned policy.

Key insight: For best learning, use Shared Policy. Use other modes to visualize how different exploration strategies affect the learning process, but expect higher average steps.
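The gap between a decaying and a fixed exploration rate is easy to see numerically. The sketch below assumes an exponential decay shape and a decay factor of 0.9. Only the start range (0.5-0.8), the 0.01 floor, and the fixed 50% rate come from the post; the function names are hypothetical.

```python
def decayed_epsilon(episode, start=0.8, end=0.01, decay=0.9):
    """Exponential decay from `start` toward a floor of `end` (shape assumed)."""
    return max(end, start * decay ** episode)


def fixed_epsilon(episode, value=0.5):
    """High Exploration style: the random-action rate never changes."""
    return value


# Compare the two schedules at a few points in training.
for ep in (0, 10, 30, 60):
    print(ep, round(decayed_epsilon(ep), 3), fixed_epsilon(ep))
```

With the decayed schedule a scout ends up acting greedily about 99% of the time, while the fixed schedule keeps taking a random action on half of all steps no matter how good the Q-table has become.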

Learning Phases

| Phase | Episodes | Avg Steps | Behavior |
| --- | --- | --- | --- |
| Random | 1-5 | ~70 | All scouts exploring randomly |
| Early Learning | 5-15 | 40-60 | Policy starts forming |
| Convergence | 15-30 | 15-25 | Clear optimal path emerges |
| Stable | 30+ | 12-18 | Near-optimal with random scout noise |

Why “Average Steps to Goal”?

Success rate is coarse-grained—with 5 scouts, only 6 values are possible (0%, 20%, 40%, 60%, 80%, 100%). After ~10 episodes, all scouts typically reach the goal. Average steps shows continued policy refinement, dropping from ~70 (random) to ~8 (optimal).
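The granularity argument is simple arithmetic, sketched below. The per-scout step counts are made-up numbers used only to show that the average keeps moving after the success rate has saturated.

```python
from fractions import Fraction
from statistics import mean


def possible_success_rates(num_scouts):
    """Every success rate reachable when k of num_scouts reach the goal."""
    return [Fraction(k, num_scouts) for k in range(num_scouts + 1)]


percentages = [int(rate * 100) for rate in possible_success_rates(5)]

# Hypothetical step counts for two episodes in which all 5 scouts succeed:
early = [30, 42, 55, 38, 70]
late = [9, 8, 11, 10, 70]     # the last entry stands in for the random scout
early_avg, late_avg = mean(early), mean(late)
```

Both episodes score 100% on success rate, yet the average steps figure still separates them.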

Running the Visualization

    ./scripts/serve.sh
    # Open http://localhost:3200

- Yew/WASM frontend with FastAPI backend

- Speed control from 1x to 100x

- Replay mode to step through recorded training

What’s Next

Potential future directions:

| Direction | Why It Matters |
| --- | --- |
| Larger environments | Test scaling to 15x15, 25x25 grids |
| Scout communication | Real-time sharing vs passive pooling |
| Adaptive intrinsic rewards | Learn the reward function (closer to full IRPO) |
| Multi-goal environments | Multiple sparse rewards to discover |

Key Takeaways

- Intrinsic rewards drive exploration. CuriousScout and OptimisticScout implement different philosophies: novelty bonuses vs optimistic initialization.

- Strategy quality > diversity in simple environments. Five good scouts beat five diverse but weaker scouts.

- Diversity during learning, convergence after. The “many eyes” benefit comes from diverse exploration during learning. Once Q-values converge, all scouts should follow the same optimal policy.

- Shared Q-table enables collective learning. All scouts contribute to one Q-table, so more explorers means faster convergence.

- Visual diversity costs performance. Modes like “Diverse Paths” create interesting heatmaps but suboptimal behavior. Use “Shared Policy” for best learning results.

References

| Concept | Paper |
| --- | --- |
| IRPO | Intrinsic Reward Policy Optimization (Cho & Tran 2026) |
| Reagent | Reasoning Reward Models for Agents (Fan et al. 2026) |
| ICM | Curiosity-driven Exploration (Pathak et al. 2017) |

Diverse exploration, convergent execution. Many eyes see more, but the best path is the one they all agree on.

-

592 words • 3 min read • Abstract

How AI Learns Part 1: The Many Meanings of Learning

When people say, “AI learned something,” they usually mean one of at least four very different things.

Large Language Models (LLMs) do not learn in one single way. They learn at different time scales, in different locations, and with very different consequences. To understand modern AI systems—especially agents—we need to separate these layers.

| Resource | Link |
| --- | --- |
| Related | ICL Revisited, RLM, Engram |

Four Time Scales of Learning

Learning happens at different layers with different persistence and speed.

1. Pretraining (Years)

This is the foundation.

The model trains on massive datasets using gradient descent. The result is a set of weights—billions of parameters—encoding statistical structure of language and knowledge.

This learning:

- Is slow and expensive

- Persists across restarts

- Cannot easily be reversed

- Is vulnerable to interference if modified later

Think of this as long-term biological memory.

2. Fine-Tuning (Days to Weeks)

Fine-tuning modifies the weights further, but with narrower data.

This includes:

- Instruction tuning (following directions)

- Alignment methods (Reinforcement Learning from Human Feedback (RLHF), Direct Preference Optimization (DPO))

- Domain adaptation

- Parameter-efficient methods like Low-Rank Adaptation (LoRA)

This is still weight-based learning.

It persists across restarts. It risks catastrophic forgetting. It modifies the brain itself.

3. Memory-Based Learning (Seconds to Minutes)

This is where many modern systems shift.

Instead of changing weights, they store information externally:

- RAG (Retrieval-Augmented Generation)

- CAG (Cache-Augmented Generation)

- Vector databases

- Engram-style memory modules

The model retrieves relevant memory per query.

The brain stays stable. The notebook grows.

This learning:

- Persists across restarts

- Survives model upgrades

- Does not cause forgetting

- Is fast
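The "notebook" idea can be sketched as a store that grows while the model stays untouched. This toy uses word overlap as a stand-in for the embedding search a real RAG system would use. The `NotebookMemory` class, `build_prompt` helper, and sample notes are all hypothetical.

```python
class NotebookMemory:
    """Toy external memory: stores text notes, retrieves by word overlap."""

    def __init__(self):
        self.notes = []

    def add(self, text):
        """Learning here is just appending; no weights are involved."""
        self.notes.append(text)

    def retrieve(self, query, k=1):
        """Return the k notes sharing the most words with the query."""
        query_words = set(query.lower().split())
        ranked = sorted(
            self.notes,
            key=lambda note: len(query_words & set(note.lower().split())),
            reverse=True,
        )
        return ranked[:k]


def build_prompt(memory, question):
    """Place retrieved notes in front of the question for a frozen model."""
    context = "\n".join(memory.retrieve(question))
    return f"Context:\n{context}\n\nQuestion: {question}"


memory = NotebookMemory()
memory.add("The deploy script lives in scripts/deploy.sh")
memory.add("Standup is at 9:30 on weekdays")
prompt = build_prompt(memory, "Where is the deploy script?")
```

Swapping the model for a newer one would leave `memory.notes` untouched, which is why this kind of learning survives upgrades.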

4. In-Context Learning (Milliseconds)

This is temporary reasoning scaffolding.

Information exists only in the prompt window.

It:

- Does not update weights

- Does not persist across sessions

- Is powerful but fragile

- Suffers from context rot

This is working memory.

Why This Matters

Most discussions collapse all of this into “the model learned.”

But:

- Updating weights risks forgetting

- Updating memory does not

- Updating prompts does not persist

- Updating adapters can be modular and reversible

Continuous learning systems must coordinate all four.

Persistence Comparison

| Mechanism | Persists Across Chat? | Persists Across Restart? | Persists Across Model Change? |
| --- | --- | --- | --- |
| Pretraining | Yes | Yes | No |
| Fine-tune | Yes | Yes | No |
| LoRA | Yes | Yes | Usually |
| Distillation | Yes | Yes | No |
| ICL | No | No | No |
| RAG | Yes | Yes | Yes |
| Engram | Yes | Yes | Yes |
| CAG | Yes | Yes | Yes |

That last column is subtle but powerful for agents.

References

| Concept | Paper |
| --- | --- |
| LoRA | LoRA: Low-Rank Adaptation of Large Language Models (Hu et al. 2021) |
| RAG | Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks (Lewis et al. 2020) |
| ICL | What Can Transformers Learn In-Context? (Garg et al. 2022) |
| Engram | Engram: Conditional Memory via Scalable Lookup (DeepSeek 2025) |
| DPO | Direct Preference Optimization (Rafailov et al. 2023) |

Coming Next

In Part 2, we’ll examine the two fundamental failure modes that arise from confusing these layers: catastrophic forgetting and context rot.

Learning happens in layers of permanence.

-

1173 words • 6 min read • Abstract

music-pipe-rs: Unix Pipelines for MIDI Composition

After building midi-cli-rs for quick mood-based generation, I wanted something more surgical. What if music generation worked like Unix commands—small tools connected by pipes?

| Resource | Link |
| --- | --- |
| Code | music-pipe-rs |
| Related | midi-cli-rs |
| Next | Web Demo and Multi-Instrument |

The Unix Philosophy for Music

Most generative music tools are monolithic. You get one application with a closed workflow. If you want to inspect intermediate results, you can’t. If you want to swap one transformation for another, you rebuild everything.

Unix solved this decades ago: small tools that do one thing well, connected by pipes. Each tool reads from stdin, writes to stdout. You can inspect any point in the pipeline with `head`, filter with `grep`, transform with `jq`.

music-pipe-rs applies this philosophy to MIDI composition.

A Pipeline in Action

    seed 12345 | motif --notes 16 --bpm 120 | humanize | to-midi --out melody.mid

Four stages:

- seed establishes the random seed for the entire pipeline

- motif generates a melodic pattern (using the pipeline seed)

- humanize adds timing and velocity variation (using the same seed)

- to-midi converts the event stream to a standard .mid file

The output plays in any DAW.

Seed-First Architecture

The `seed` stage goes at the head of the pipeline:

    # Explicit seed for reproducibility
    seed 12345 | motif --notes 16 | humanize | to-midi --out melody.mid

    # Auto-generated seed (printed to stderr)
    seed | motif --notes 16 | humanize | to-midi --out melody.mid
    # stderr: seed: 1708732845

All downstream stages read the seed from the event stream. No `--seed` arguments scattered across the pipeline. One seed, set once, used everywhere.

This means:

- Same seed = identical output across all random stages

- Different seed = different composition with same structure

- Reproducibility is trivial: just save the seed number
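How a downstream stage might pick the seed out of the stream can be sketched in Python. This is not the tool's Rust implementation: it assumes the stream opens with a `{"type":"Seed","seed":...}` event, and the jitter range, fallback seed, and `humanize` function body are guesses made for illustration.

```python
import json
import random


def humanize(lines, max_jitter=10):
    """Read JSONL events; jitter note times using the seed found in the stream."""
    rng = random.Random(0)  # fallback if no Seed event arrives (assumed behavior)
    out = []
    for line in lines:
        event = json.loads(line)
        if event["type"] == "Seed":
            rng = random.Random(event["seed"])  # reseed from the stream
        elif "t" in event:
            jitter = rng.randint(-max_jitter, max_jitter)
            event["t"] = max(0, event["t"] + jitter)
        out.append(json.dumps(event))  # Seed is passed on for later stages
    return out


stream = [
    '{"type":"Seed","seed":12345}',
    '{"type":"NoteOn","t":0,"ch":0,"key":60,"vel":80}',
    '{"type":"NoteOff","t":480,"ch":0,"key":60}',
]
first = humanize(stream)
second = humanize(stream)
```

Running the stage twice on the same stream yields identical output, and because the Seed event is forwarded unchanged, any later random stage can derive its own generator the same way.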

JSONL: The Intermediate Format

Between stages, events flow as JSONL (JSON Lines). Each line is a complete event:

    {"type":"Seed","seed":12345}
    {"type":"NoteOn","t":0,"ch":0,"key":60,"vel":80}
    {"type":"NoteOff","t":480,"ch":0,"key":60}

This format is human-readable and tool-friendly:

    # See the first 10 events
    seed 42 | motif --notes 8 | head -10

    # Count how many NoteOn events
    seed 42 | motif --notes 16 | grep NoteOn | wc -l

    # Pretty-print with jq
    seed 42 | motif --notes 4 | jq .

No binary formats to decode. No proprietary protocols. Just text.
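Because each line is standalone JSON, ad hoc analysis needs nothing beyond a JSON parser. The sketch below assumes only the three event shapes shown above. The `count_events` and `note_durations` helpers are hypothetical and not part of music-pipe-rs.

```python
import json


def count_events(lines, event_type):
    """Python equivalent of `grep <type> | wc -l`, but matching the type field."""
    return sum(
        1 for line in lines
        if line.strip() and json.loads(line)["type"] == event_type
    )


def note_durations(lines):
    """Pair each NoteOn with the next NoteOff on the same channel and key."""
    started, durations = {}, []
    for line in lines:
        if not line.strip():
            continue
        event = json.loads(line)
        note = (event.get("ch"), event.get("key"))
        if event["type"] == "NoteOn":
            started[note] = event["t"]
        elif event["type"] == "NoteOff" and note in started:
            durations.append(event["t"] - started.pop(note))
    return durations


sample = [
    '{"type":"Seed","seed":12345}',
    '{"type":"NoteOn","t":0,"ch":0,"key":60,"vel":80}',
    '{"type":"NoteOff","t":480,"ch":0,"key":60}',
]
note_ons = count_events(sample, "NoteOn")
durations = note_durations(sample)
```

In a real pipeline the `lines` argument could be `sys.stdin`, so a script like this would sit between two stages like any other filter.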

Visualization with viz

The `viz` stage prints a sparkline to stderr while passing events through:

    seed 12345 | motif --notes 16 | viz | humanize | to-midi --out melody.mid

Output on stderr:

    ▃▅▇▅▃▁▂▄▆▇▆▄▂▁▃▅

For more detail, use piano roll mode:

    seed 12345 | motif --notes 16 | viz --roll

    G6  │···█············│
    F#6 │·····█··········│
    F6  │····█···········│
    G5  │·██·········█···│
    F5  │···········█····│
    E5  │·········██···█·│
    C5  │█·····███····█·█│

The visualization goes to stderr; the JSONL events continue to stdout. You can inspect the music without breaking the pipeline.
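A sparkline like the one above can be produced by scaling each pitch into one of eight block characters. This is a guess at the general technique, not the `viz` stage's actual algorithm. The `sparkline` function and the example pitches are assumptions.

```python
import sys

BARS = "▁▂▃▄▅▆▇█"


def sparkline(keys):
    """Map MIDI key numbers onto eight bar heights, lowest pitch to highest.

    Expects a non-empty list of integers.
    """
    lo, hi = min(keys), max(keys)
    span = (hi - lo) or 1  # avoid dividing by zero when all notes are equal
    return "".join(BARS[(k - lo) * (len(BARS) - 1) // span] for k in keys)


line = sparkline([60, 64, 67, 72])   # C4, E4, G4, C5
print(line, file=sys.stderr)          # stderr keeps stdout free for events
```

Writing the picture to stderr is what lets the stage sit in the middle of a pipe: whatever it prints never mixes with the JSONL on stdout.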

Available Stages

| Stage | Type | Description |
| --- | --- | --- |
| seed | Start | Establish random seed for pipeline |
| motif | Generate | Create melodic patterns |
| euclid | Generate | Euclidean rhythm generation |
| transpose | Transform | Shift notes by semitones |
| scale | Transform | Constrain notes to a scale |
| humanize | Transform | Add timing/velocity variation |
| viz | Inspect | Print sparkline visualization |
| to-midi | Output | Convert to .mid file |

Each stage is a separate binary. Mix and match as needed.

Euclidean Rhythms

The `euclid` stage generates Euclidean rhythms, which spread a number of hits as evenly as possible across a number of steps:

    # 3 hits distributed across 8 steps (Cuban tresillo)
    seed | euclid --pulses 3 --steps 8 --note 36 | to-midi --out kick.mid

    # 4-on-the-floor kick pattern
    seed | euclid --pulses 4 --steps 16 --note 36 | to-midi --out four-floor.mid

These patterns appear in music worldwide because they “feel right”: the spacing is as even as possible.
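The even spacing can be computed with a short Bresenham-style rule. This is one common way to generate Euclidean rhythms and is not necessarily what the `euclid` binary does. For some inputs it yields a rotation of the pattern that Bjorklund's algorithm would produce. The function names are hypothetical.

```python
def euclid(pulses, steps):
    """Return a list of booleans with `pulses` hits spread evenly over `steps`."""
    if steps <= 0 or not 0 <= pulses <= steps:
        raise ValueError("need steps > 0 and 0 <= pulses <= steps")
    # A step is a hit whenever the running total i * pulses wraps past a
    # multiple of `steps`, which happens exactly `pulses` times per cycle.
    return [(i * pulses) % steps < pulses for i in range(steps)]


def as_text(pattern):
    """Render hits as 'x' and rests as '.' for quick inspection."""
    return "".join("x" if hit else "." for hit in pattern)


tresillo = as_text(euclid(3, 8))
four_on_floor = as_text(euclid(4, 16))
```

Here `tresillo` comes out as `x..x..x.` and `four_on_floor` as `x...x...x...x...`, matching the two shell examples.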

Scale Locking

The