Five ML Concepts - #14

453 words • 3 min read • Abstract

5 machine learning concepts. Under 30 seconds each.

| Resource | Link |

|---|---|

| Papers | Links in References section |

| Video | Five ML Concepts #14 |

| Comments | Discord |

References

| Concept | Reference |

|---|---|

| ROC/AUC | An Introduction to ROC Analysis (Fawcett 2006) |

| Spurious Correlations | Unbiased Look at Dataset Bias (Torralba & Efros 2011) |

| Gradient Clipping | On the Difficulty of Training Recurrent Neural Networks (Pascanu et al. 2013) |

| Loss Landscapes | Visualizing the Loss Landscape of Neural Nets (Li et al. 2018) |

| Cold Start | Addressing Cold Start in Recommender Systems (Schein et al. 2002) |

Today’s Five

1. ROC / AUC

ROC curves plot true positive rate against false positive rate across all classification thresholds. AUC (Area Under the Curve) summarizes overall ranking performance in a single number.

AUC of 0.5 means random guessing; 1.0 means perfect ranking.

Like judging a student by considering every possible passing grade cutoff.

2. Spurious Correlations

Coincidental patterns in training data that don’t reflect true relationships. Models that rely on them can fail when the coincidence disappears.

Dataset curation and diverse evaluation help detect spurious features.

Like assuming umbrellas cause rain because you always see them together.

3. Gradient Clipping

Limiting the size of gradients during backpropagation. This helps prevent exploding gradients and unstable training, especially in recurrent networks.

Clipping can be by value or by global norm.

Like putting a speed limit on a car so it doesn’t lose control.

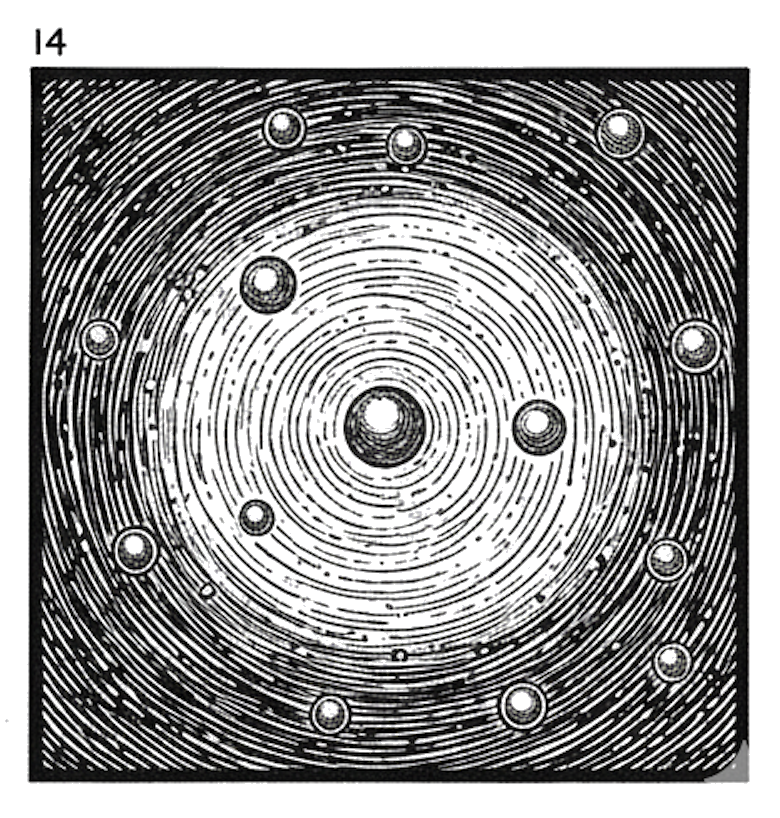

4. Loss Landscapes

How model error changes across different parameter settings. Training is like navigating this surface toward regions of lower loss.

Flat minima may generalize better than sharp ones.

Like hiking through mountains searching for the lowest valley, feeling the slope beneath your feet.

5. Cold Start Problems

Difficulty predicting for new users or items with no history. Without prior data, personalization becomes difficult.

Solutions include content-based features, popularity fallbacks, or asking initial questions.

Like a librarian trying to recommend books to someone who just walked in.

Quick Reference

| Concept | One-liner |

|---|---|

| ROC / AUC | Classifier performance across thresholds |

| Spurious Correlations | Coincidental patterns that don’t generalize |

| Gradient Clipping | Limit gradient size for stability |

| Loss Landscapes | Error surface over parameter space |

| Cold Start | No history for new users/items |

Short, accurate ML explainers. Follow for more.

Part 14 of the Five ML Concepts series. View all parts | Next: Part 15 →

Comments or questions? SW Lab Discord or YouTube @SoftwareWrighter.